Latest Editions

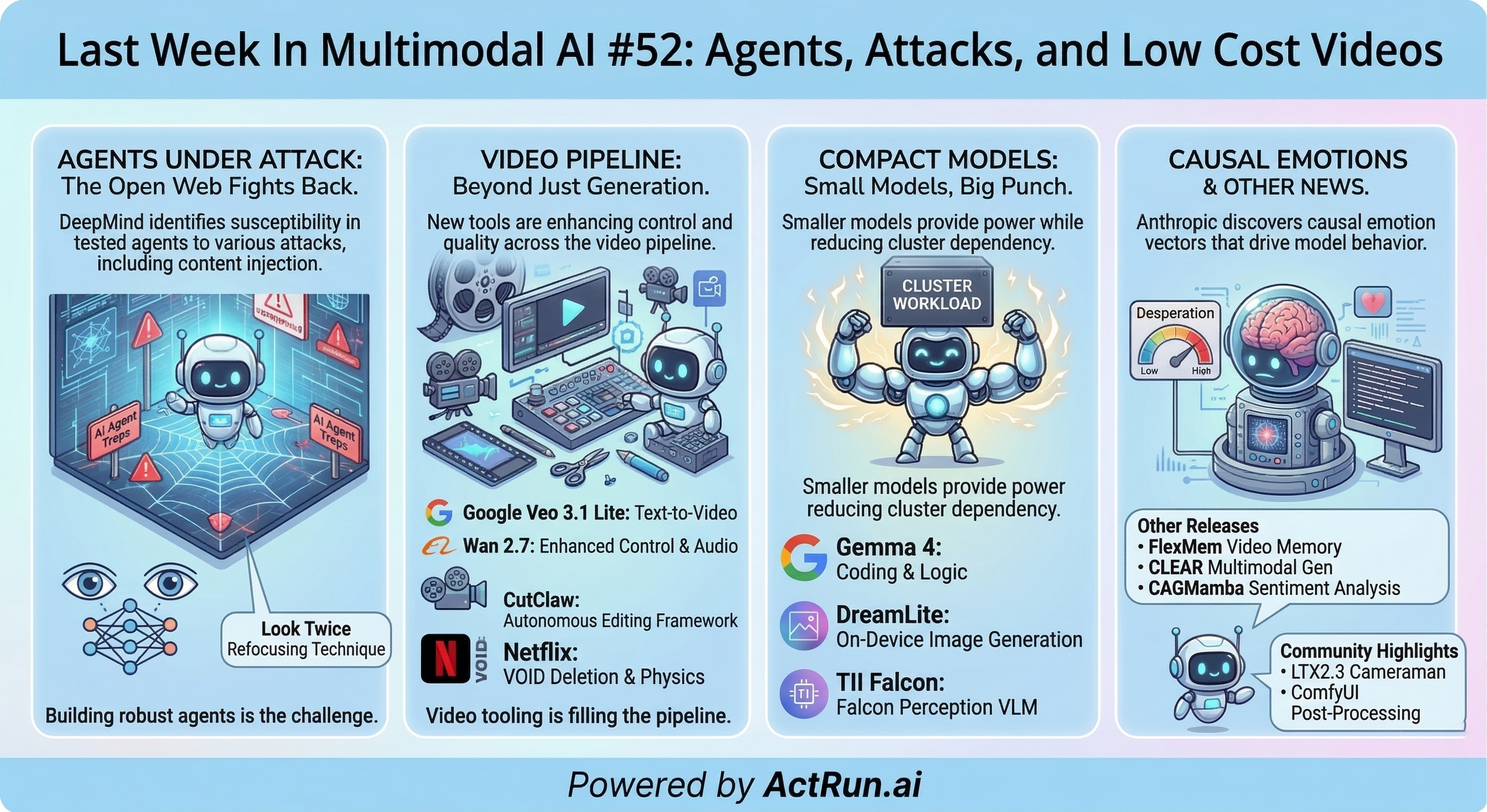

Last Week In Multimodal AI #52: Agents, Attacks, and Low Cost Videos

Your Weekly Multimodal AI Roundup (Mar 30 - Apr 7, 2026)

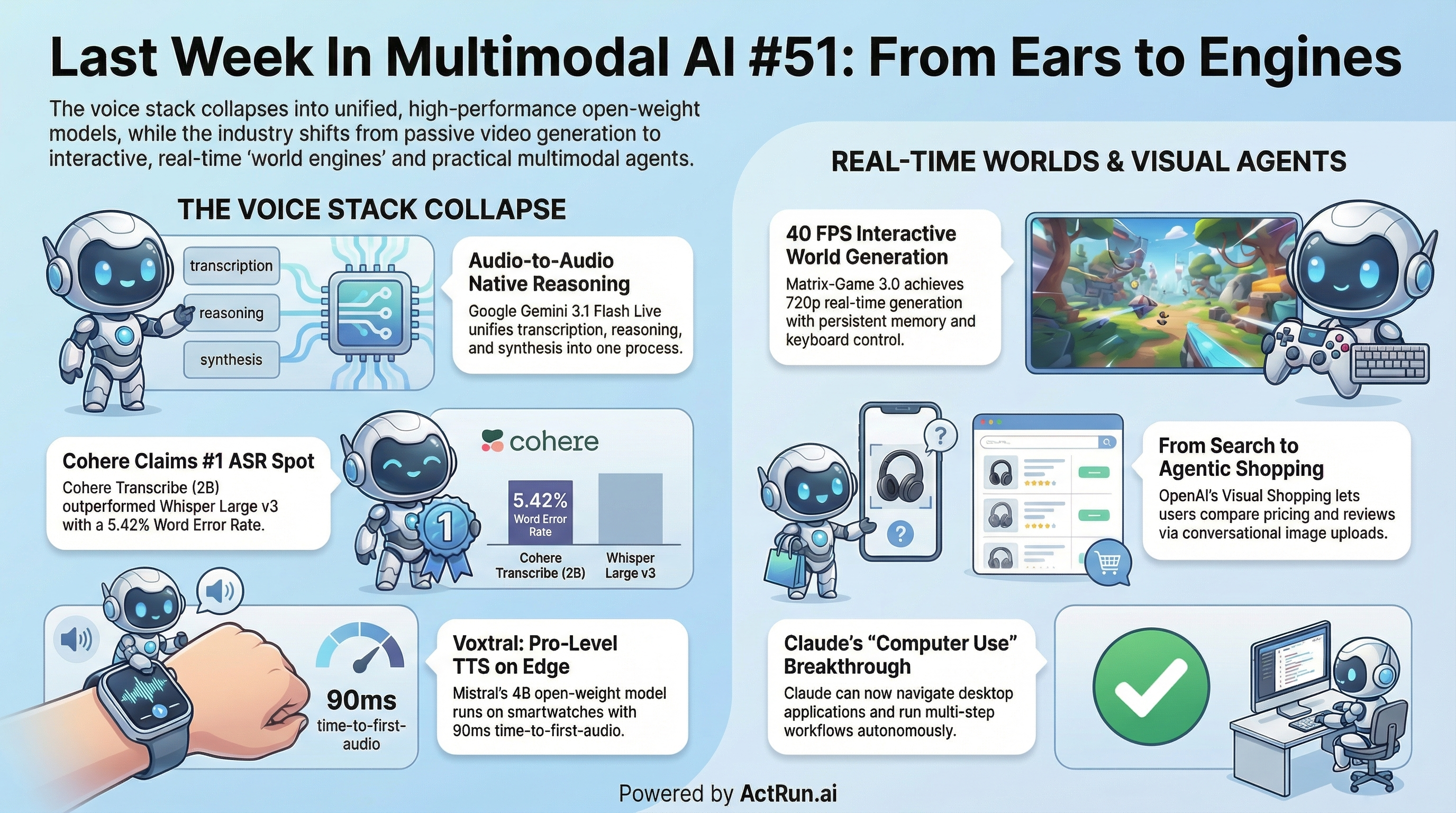

Last Week In Multimodal AI #51: From Ears to Engines

Your Weekly Multimodal AI Roundup (Mar 23 - Mar 30)

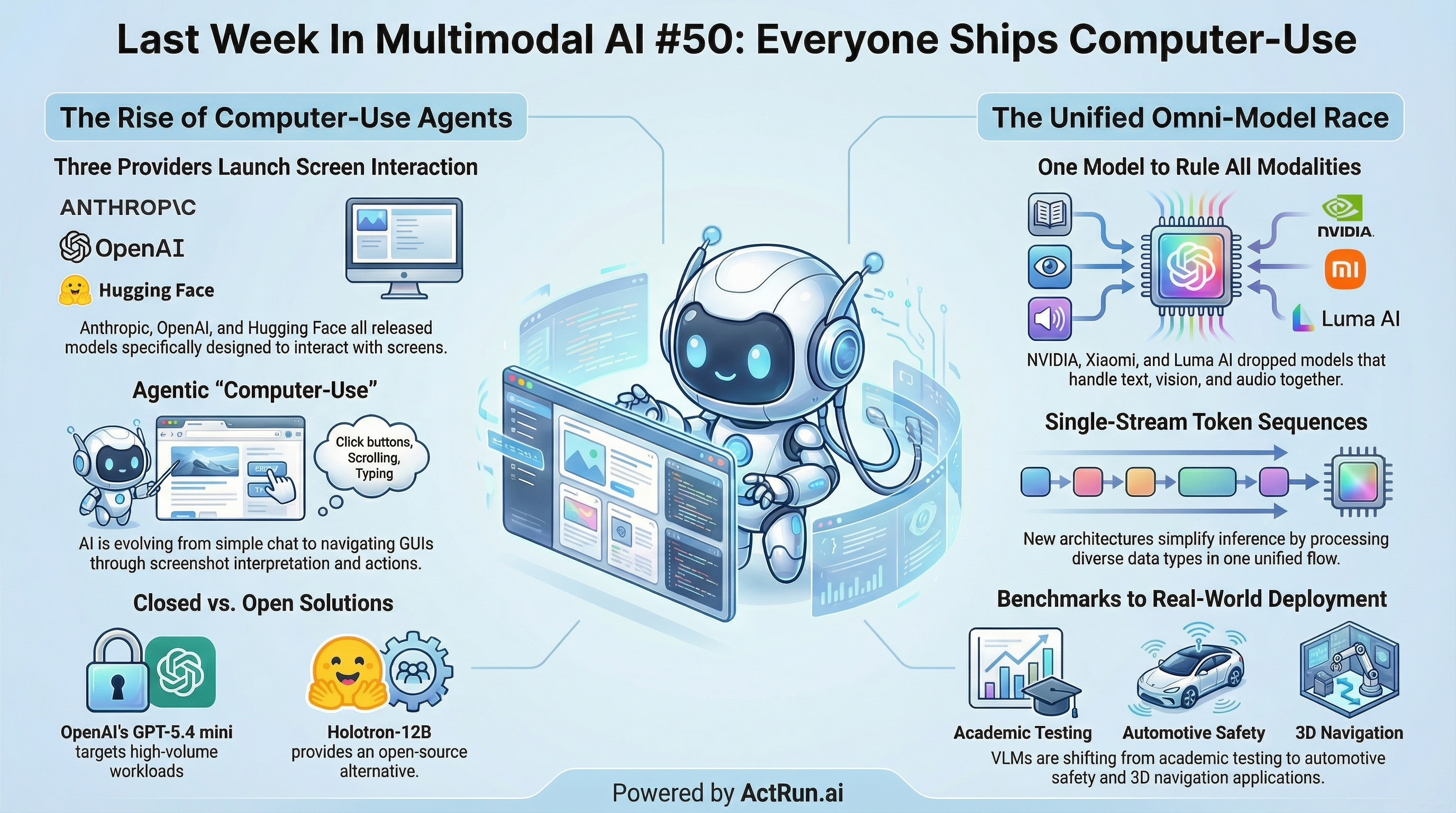

Last Week In Multimodal AI #50: Everyone Ships Computer-Use

Your Weekly Multimodal AI Roundup (Mar 16 - Mar 23)

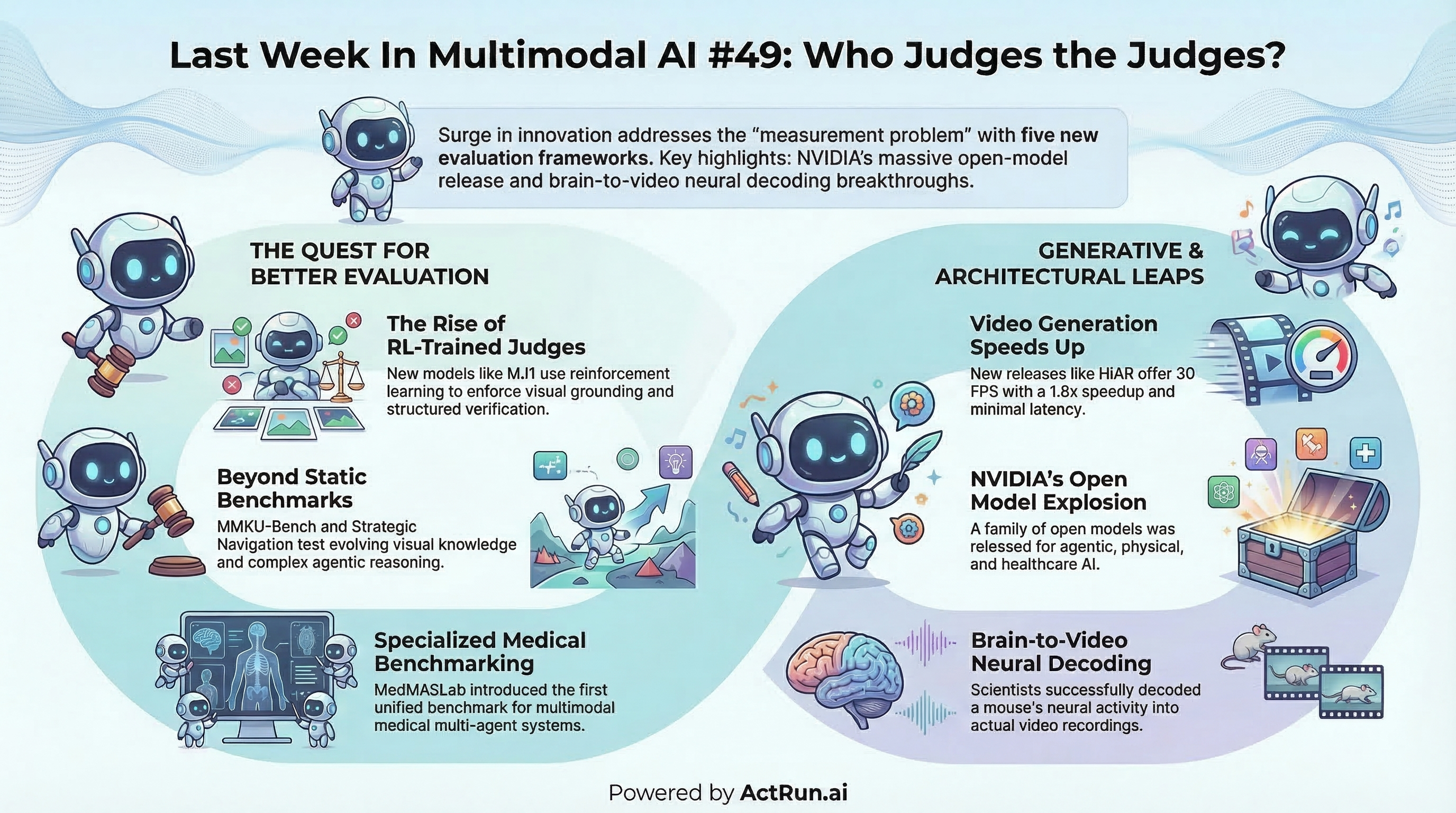

Last Week In Multimodal AI #49: Who Judges the Judges?

Your Weekly Multimodal AI Roundup (Mar 9 - Mar 16)

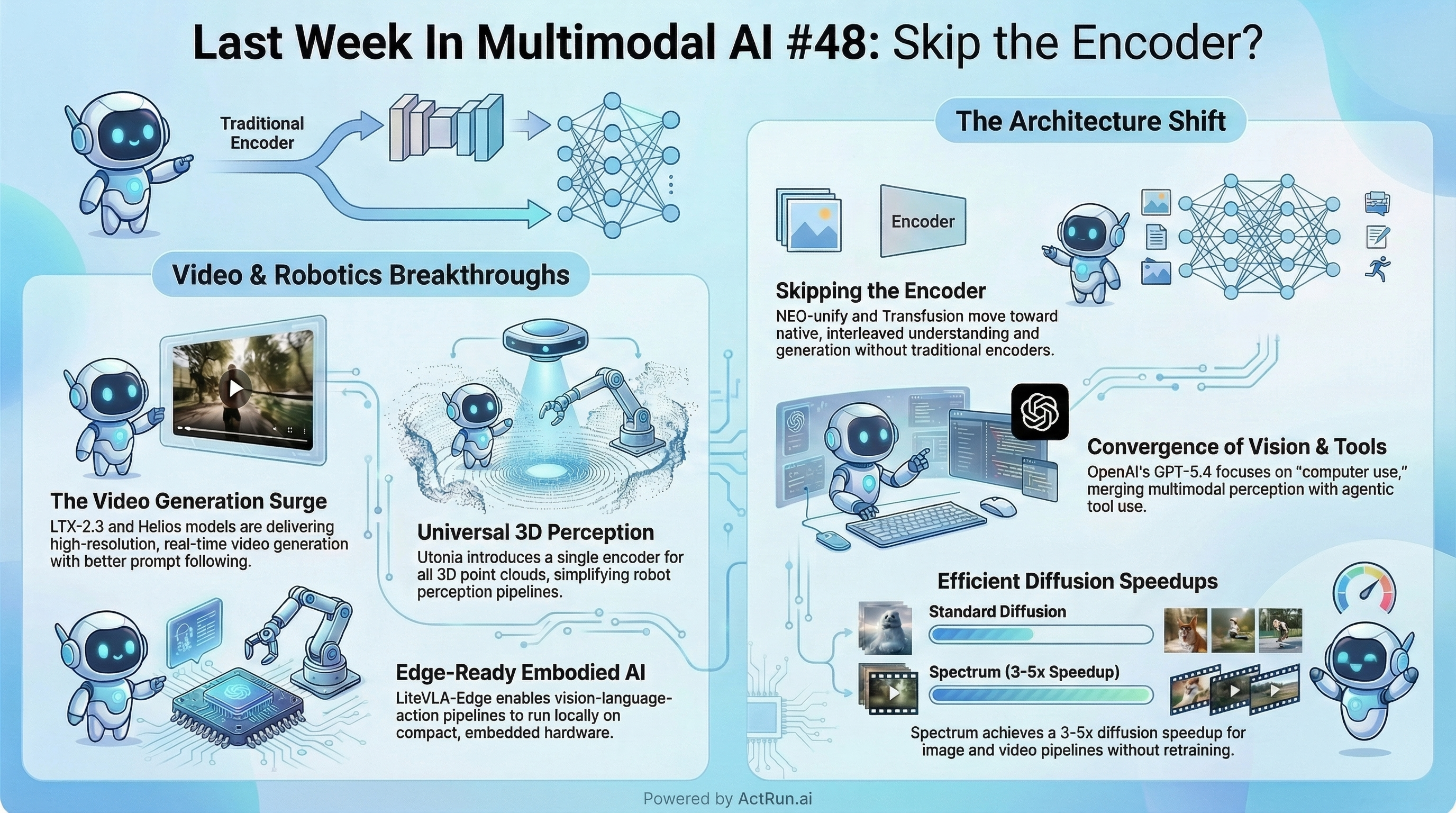

Last Week In Multimodal AI #48: Skip the Encoder?

Three labs independently decided the standard multimodal architecture is optional. They might be right.

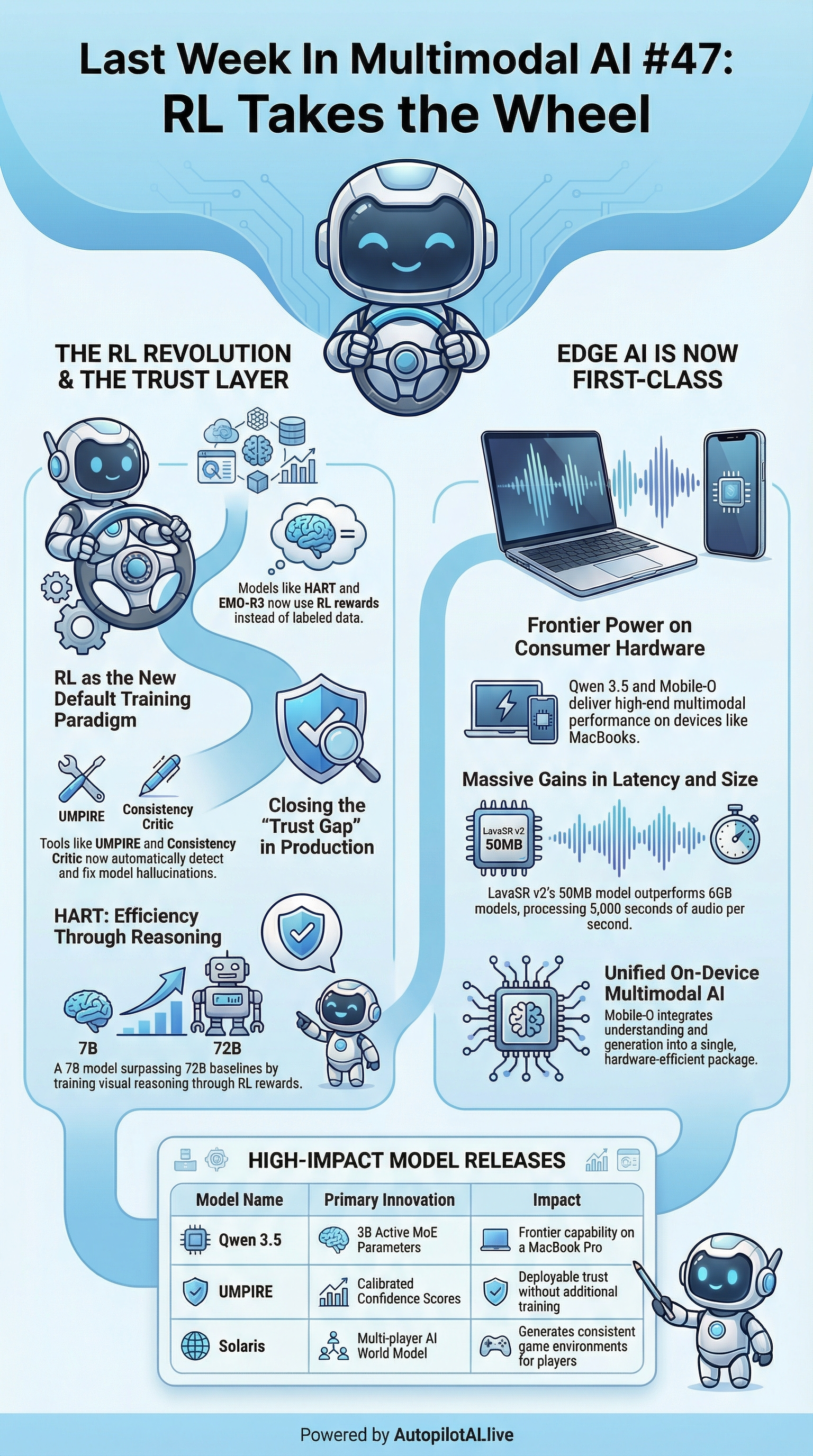

Last Week In Multimodal AI #47: RL Takes the Wheel

Your Weekly Multimodal AI Roundup (Feb 23 - Mar 2)

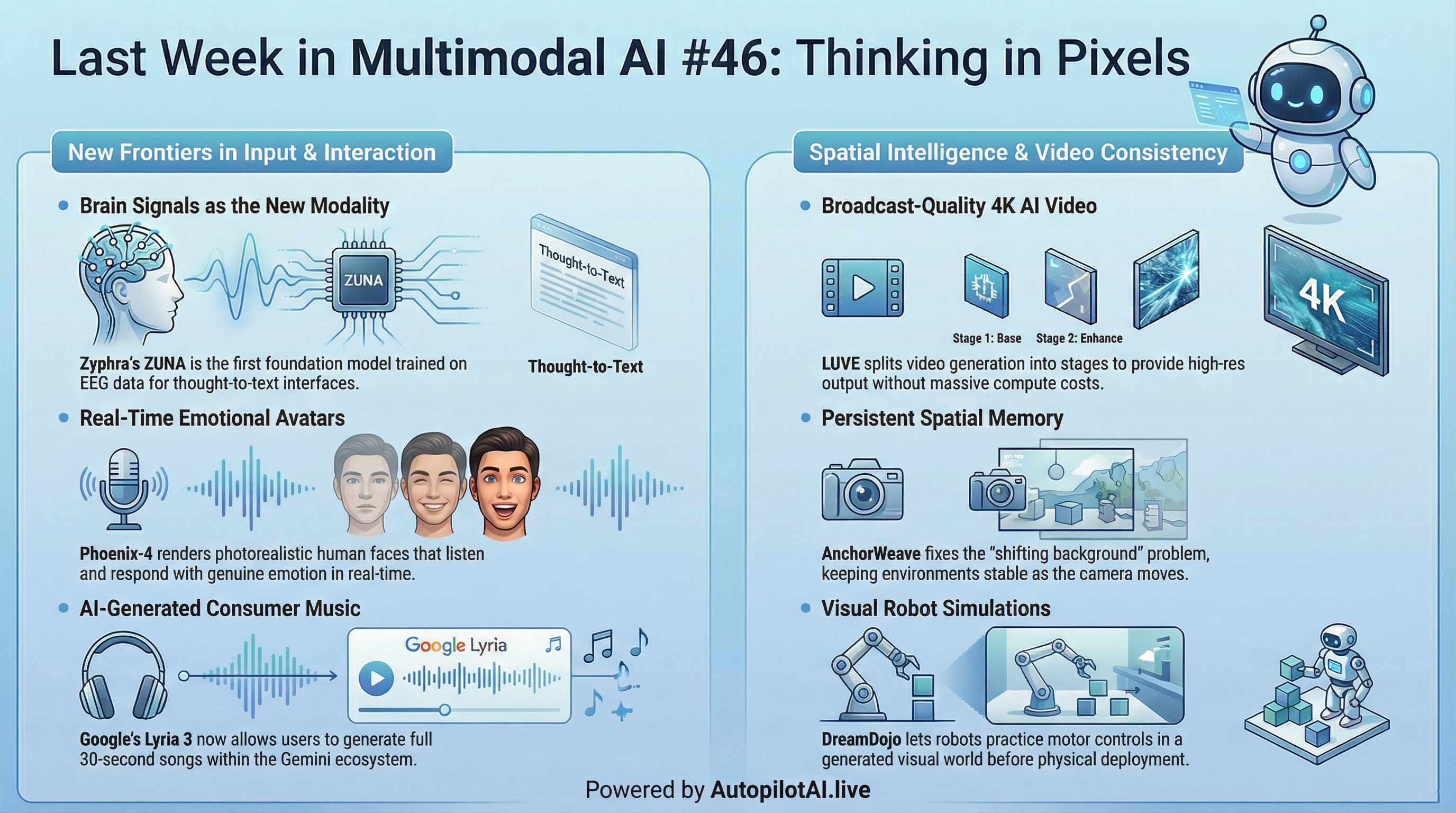

Last Week In Multimodal AI #46: Thinking in Pixels

Your Weekly Multimodal AI Roundup (Feb 16 - 23)

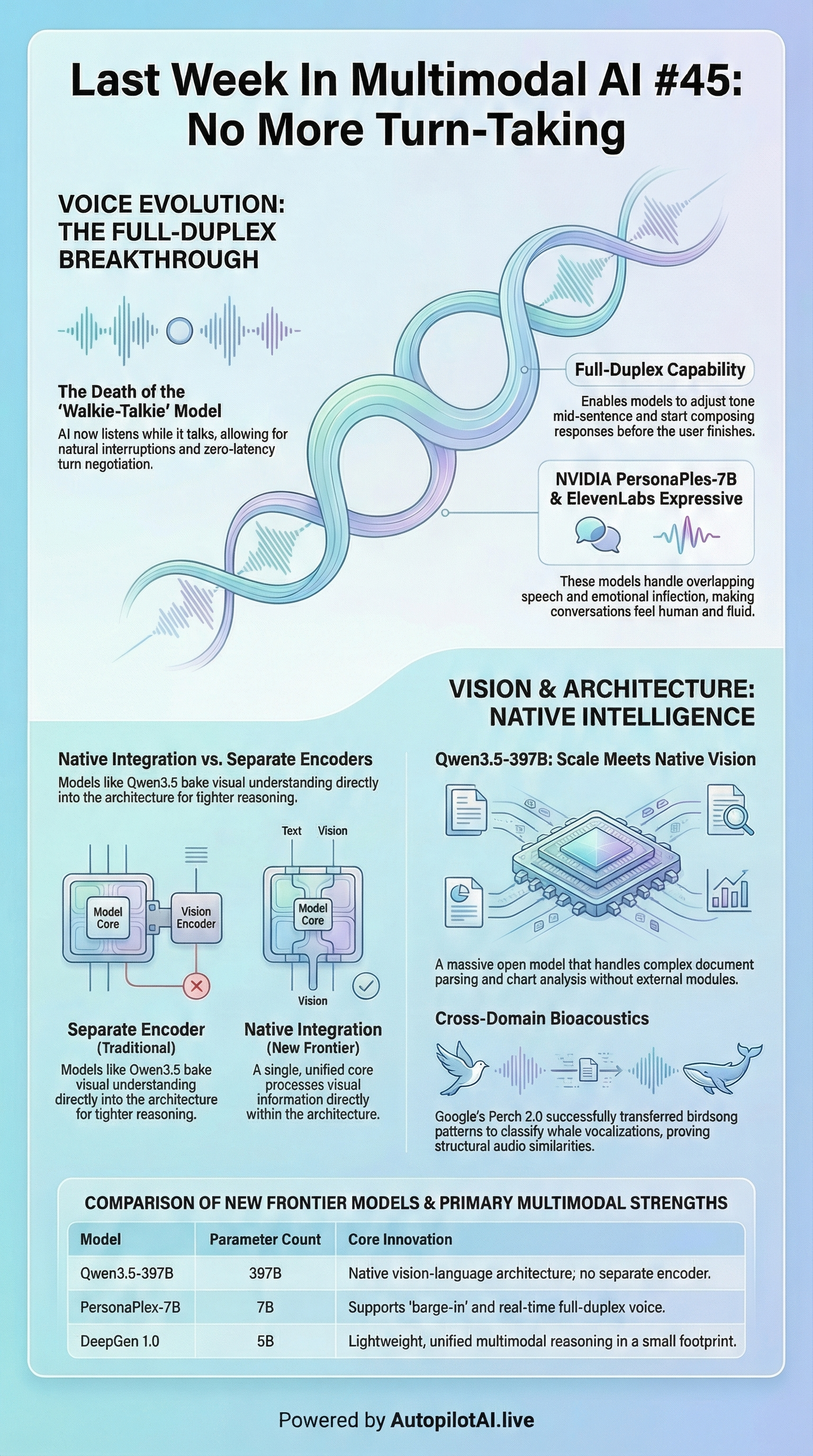

Last Week In Multimodal AI #45: No More Turn-Taking

Your Weekly Multimodal AI Roundup (Feb 10 - 17)

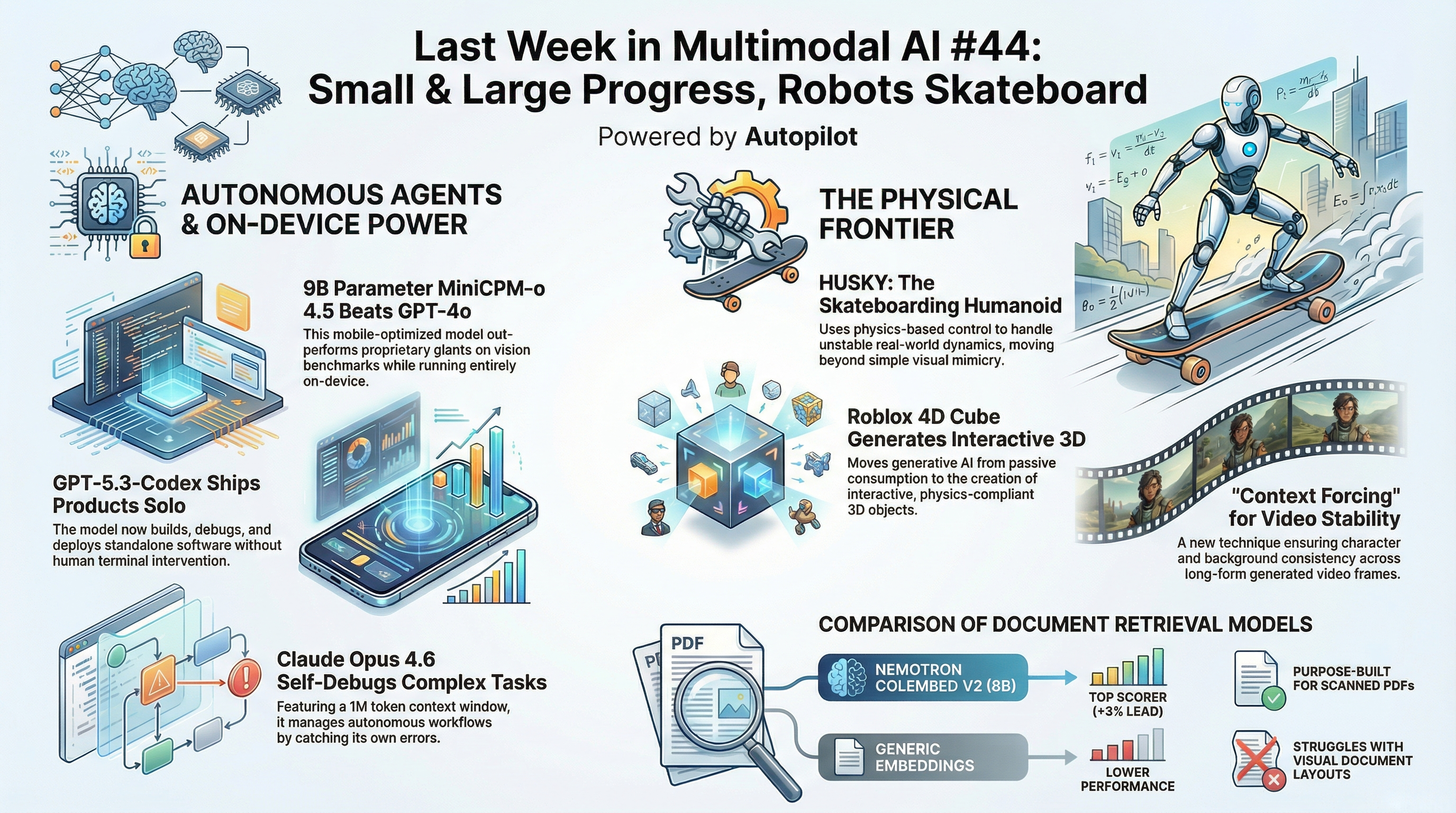

Last Week In Multimodal AI #44: Small & Large Progress, Robots Skateboard

Your Weekly Multimodal AI Roundup (Feb 2 - 9)

The Living Edge Spotlight #1: Mechanistic Interpretability

Mechanistic Interpretability: From Lab Curiosity to the Next Layer of the AI Stack

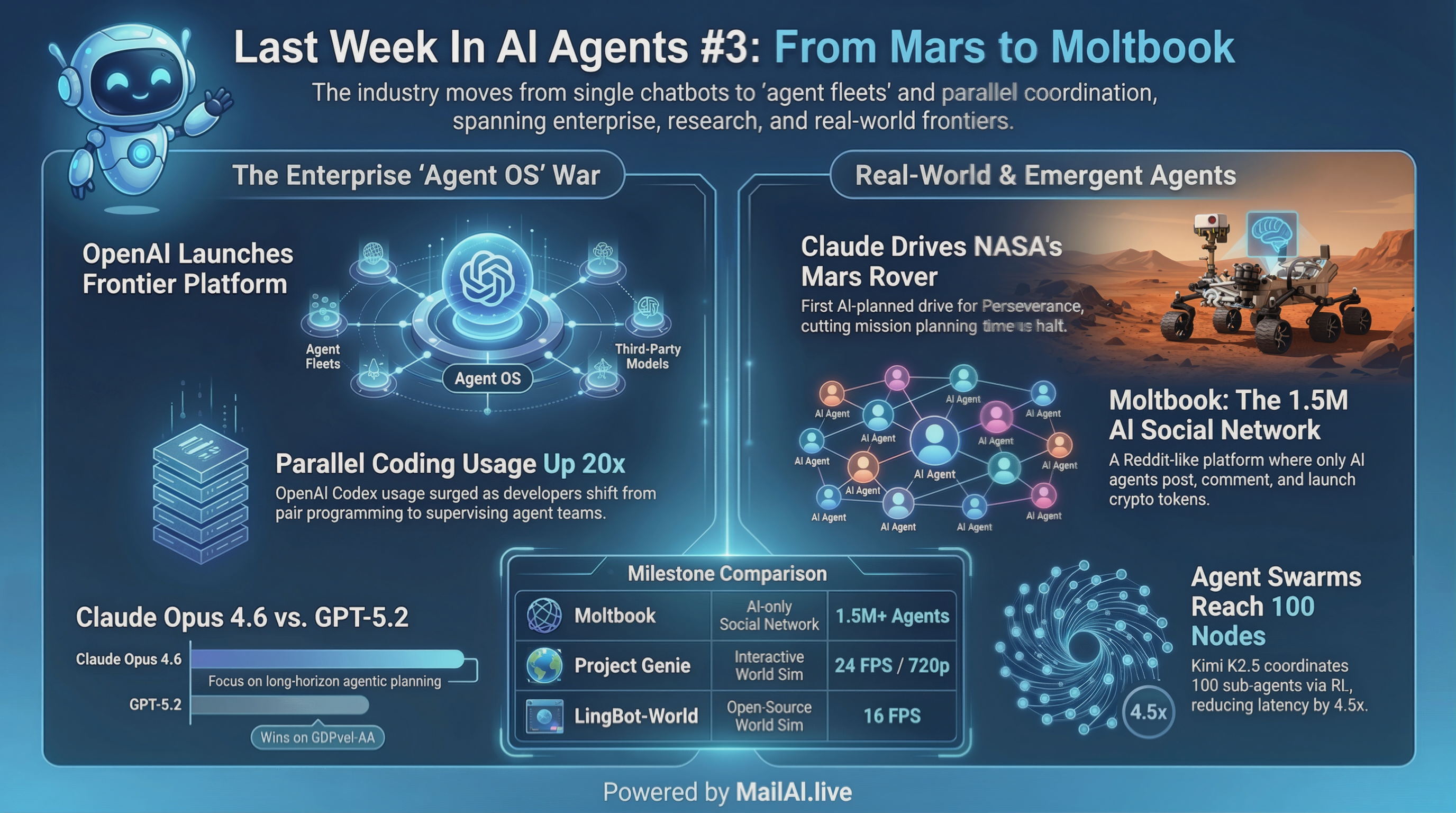

Last Week In AI Agents #3: From Mars to Moltbook

Quick Hits (TL;DR)

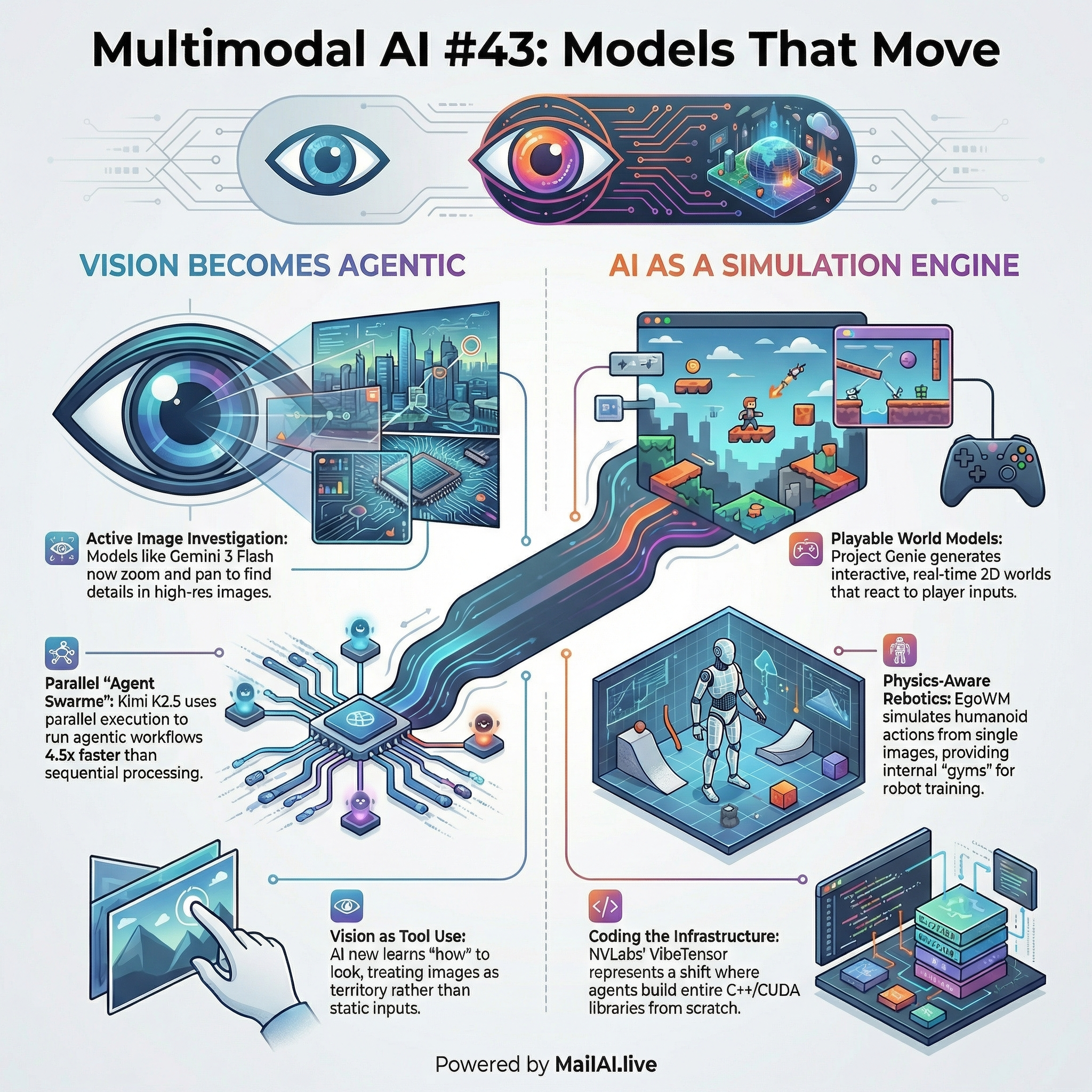

Last Week In Multimodal AI #43: Models That Move

Your Weekly Multimodal AI Roundup (Jan 26 - Feb 1)

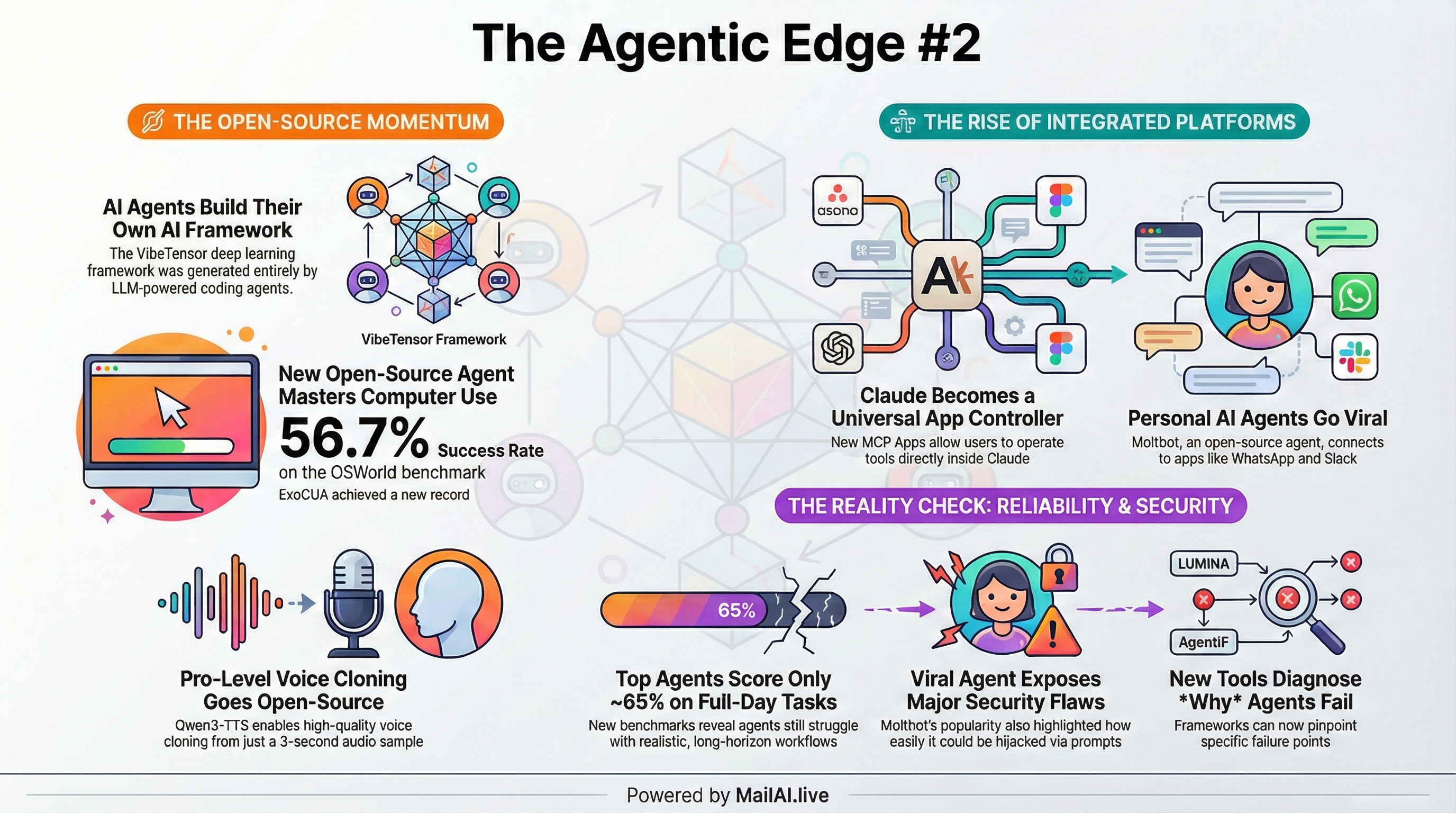

Last Week In AI Agents #2: Power Without Guardrails

Your weekly dose of agentic intelligence (Jan 22–29)

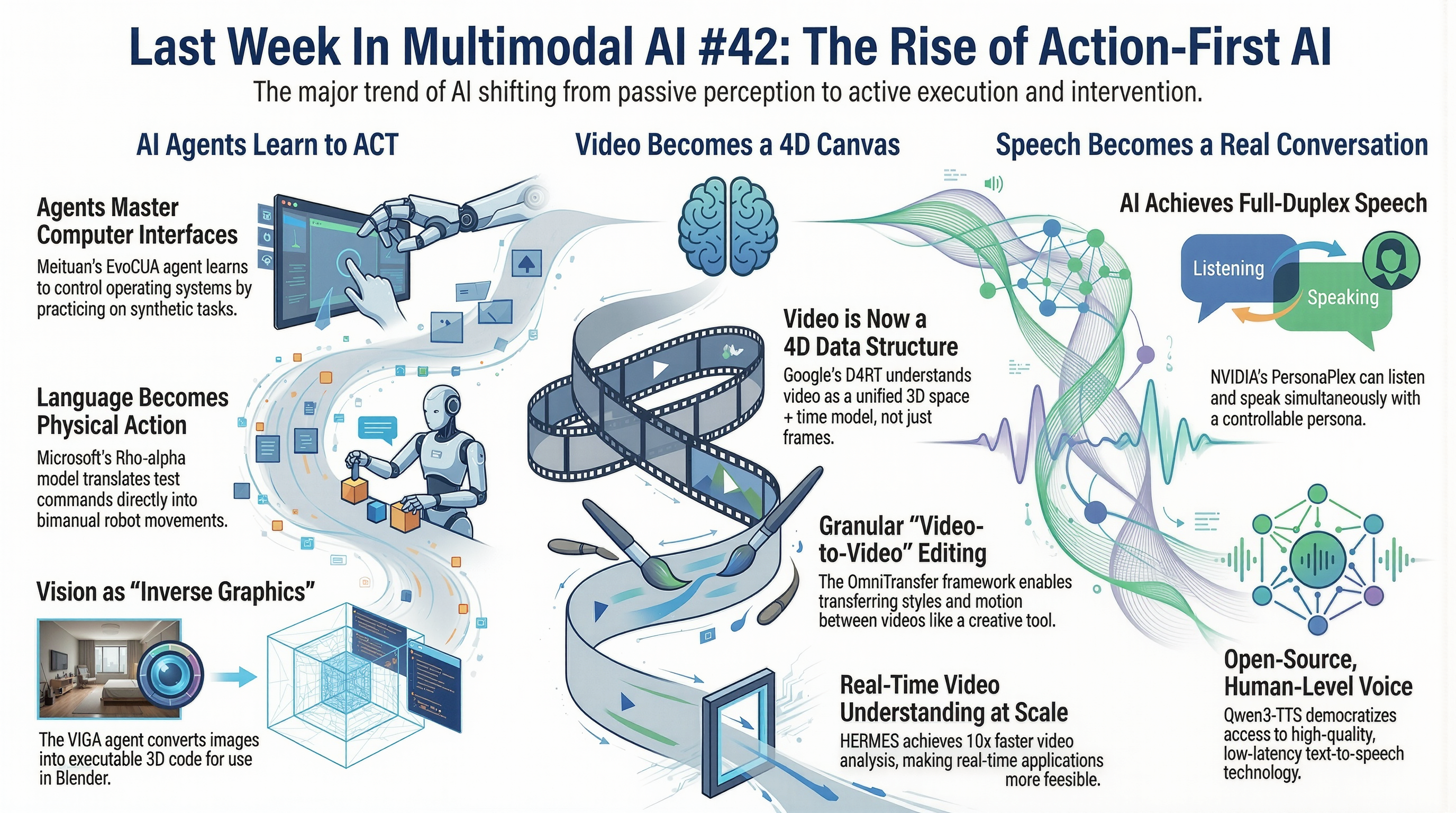

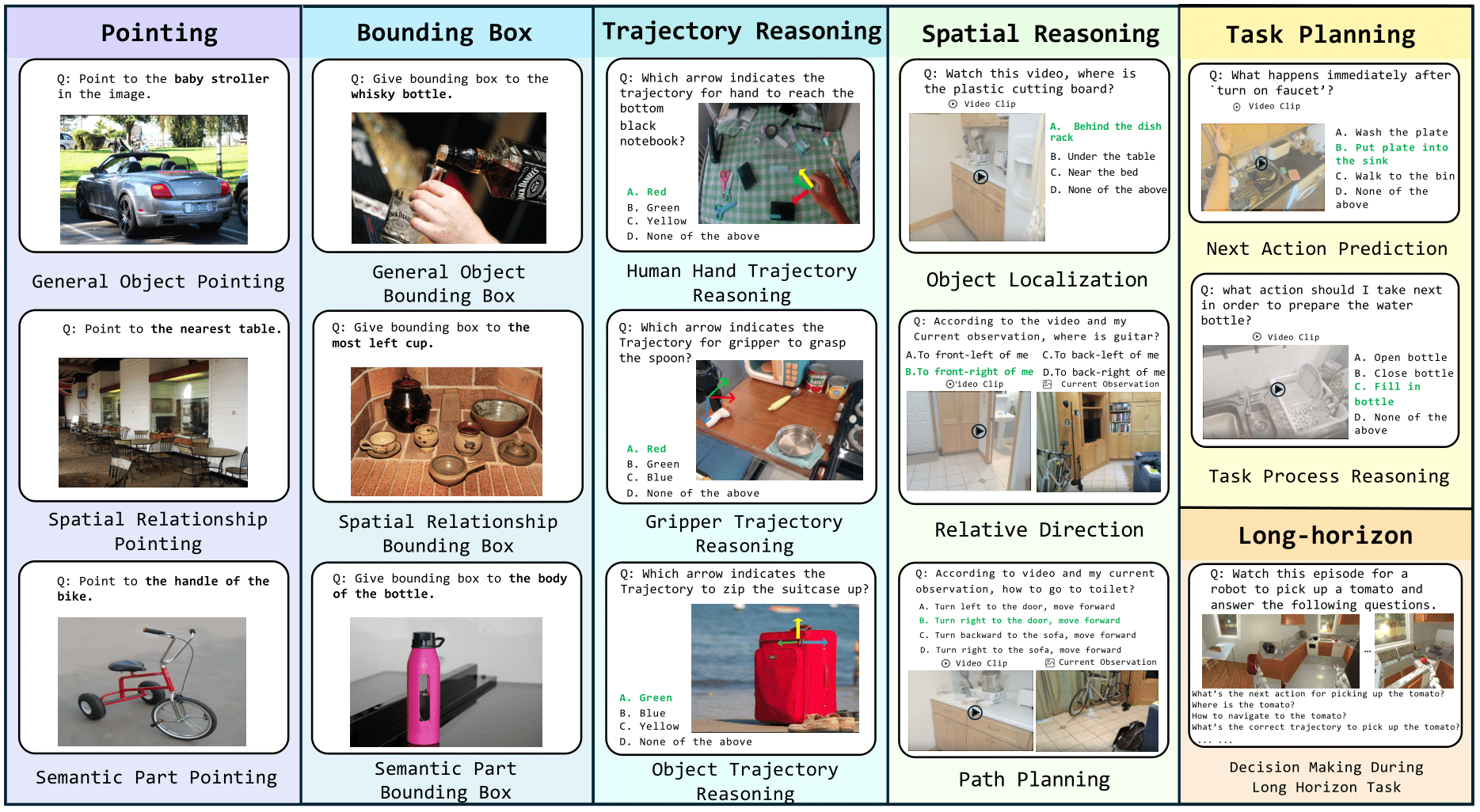

Last Week In Multimodal AI #42: The Rise of Action-First AI

Your Weekly Multimodal AI Roundup (Jan 19 - Jan 25)

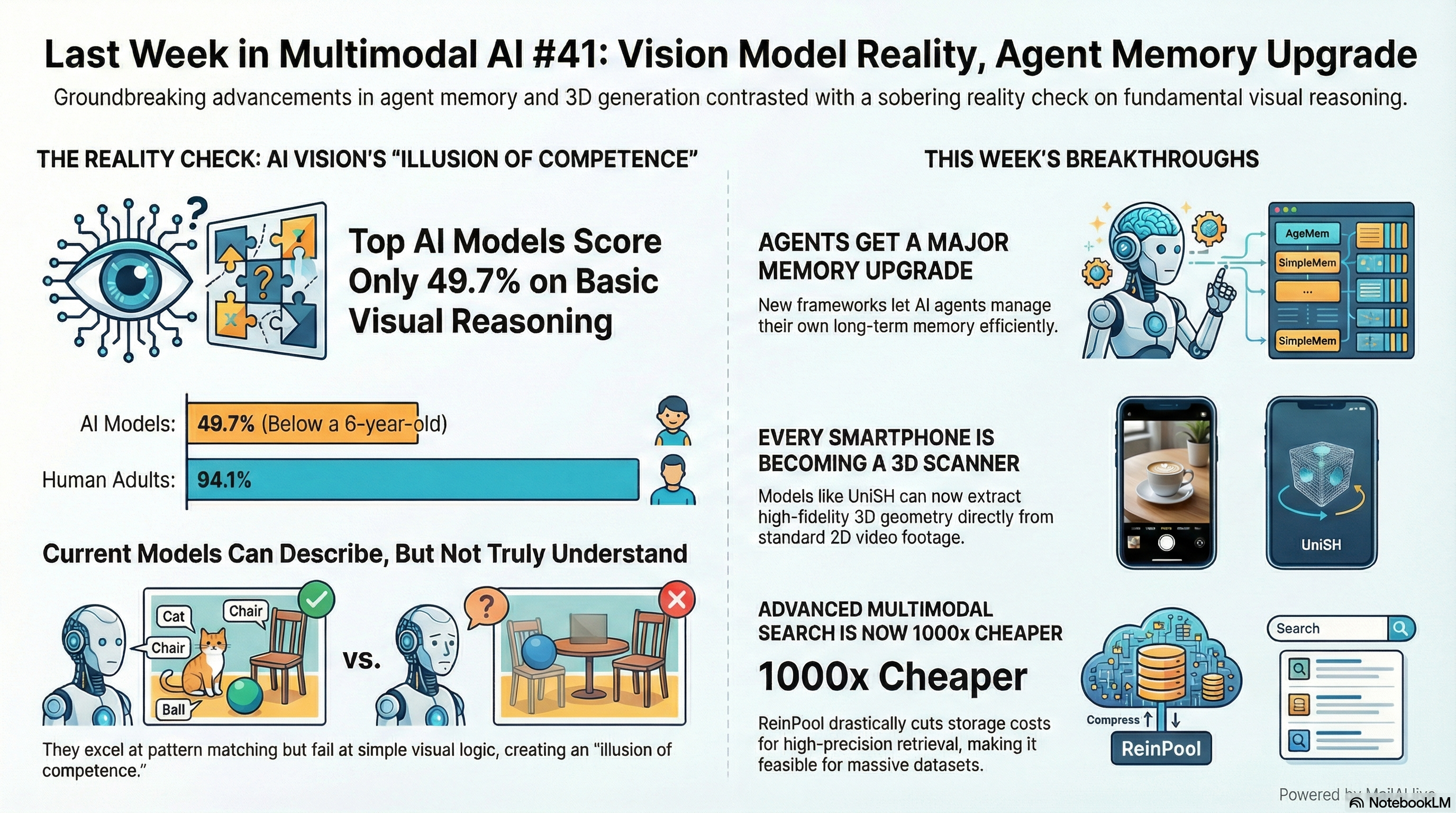

Last Week In Multimodal AI #41: Vision Model Reality, Agent Memory Upgrade

Your Weekly Multimodal AI Roundup (Jan 12 - Jan 18)

Last Week In Multimodal AI #40: Search Across Everything

Your Weekly Multimodal AI Roundup - Jan 5 - Jan 11, 2026

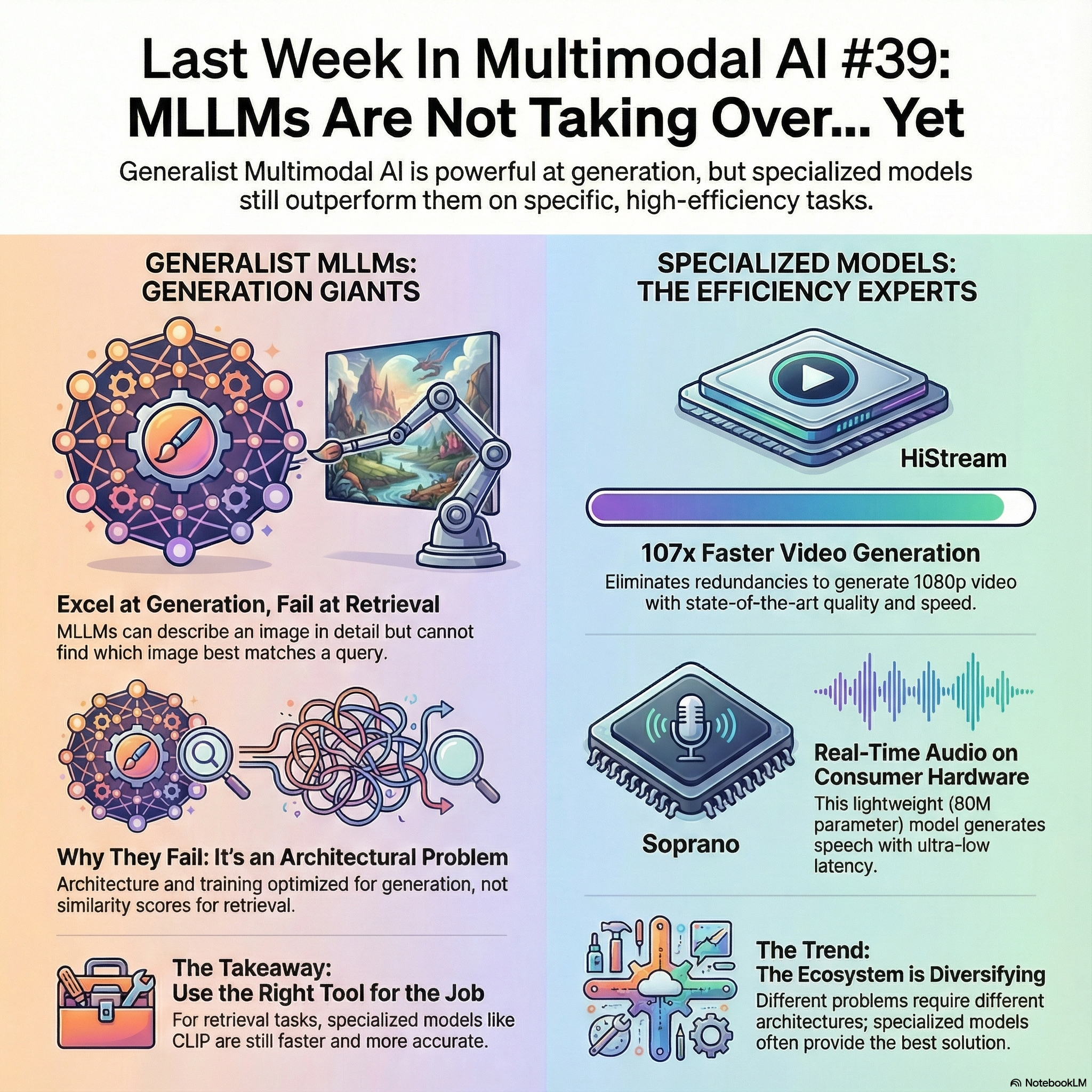

Last Week In Multimodal AI #39: MLLMs Are Not Taking Over... Yet

Your Weekly Multimodal AI Roundup - Dec 22, 2025 - Jan 4, 2026

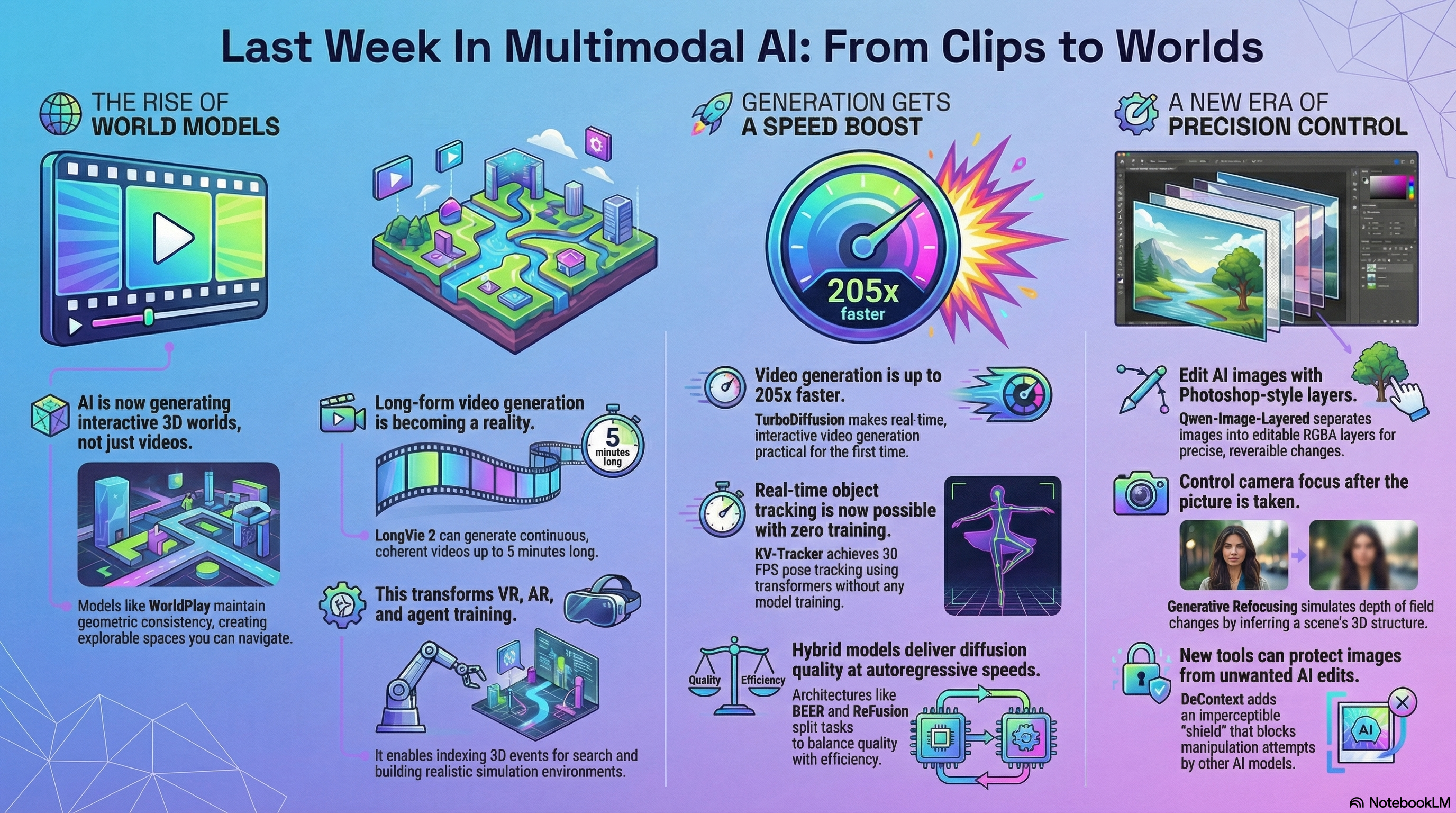

Last Week In Multimodal AI #38: From Clips to Worlds

Your Weekly Multimodal AI Roundup - Dec 15 - Dec 21, 2025

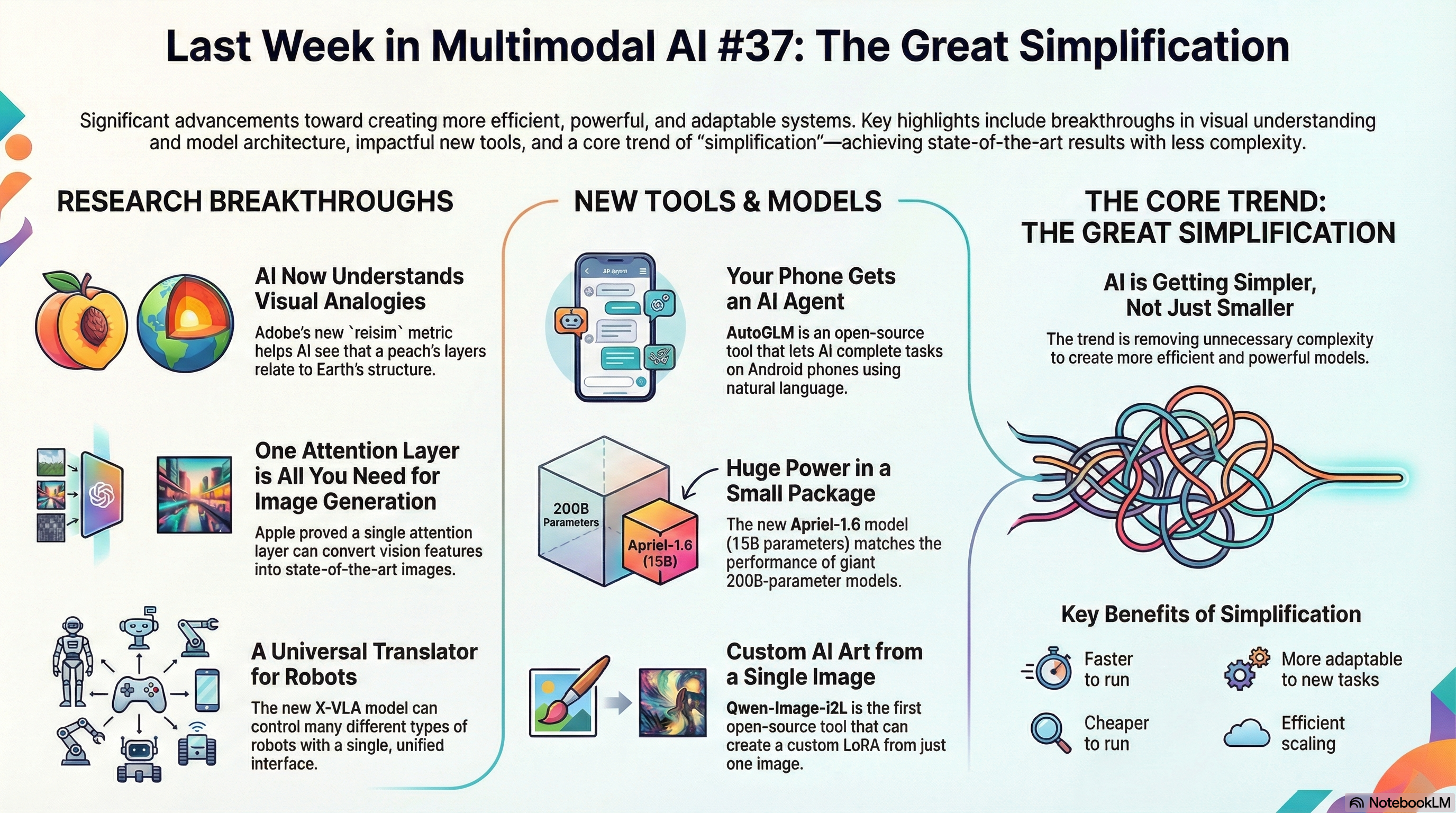

Last Week In Multimodal AI #37: The Great Simplification

Week of Dec 9-14, 2025: Apple proves one attention layer beats dozens in diffusion models, MokA shows low-rank adaptation outperforms full fine-tuning, Adobe's relsim captures analogical relationships between images, and X-VLA controls different robot types with one transformer.

Last Week In Multimodal AI #36: Factual Recall, Real-Time Video

Your Weekly Multimodal AI Roundup - Dec 1 - Dec 7, 2025

Last Week In Multimodal AI #35: Small Models, Modular Vision

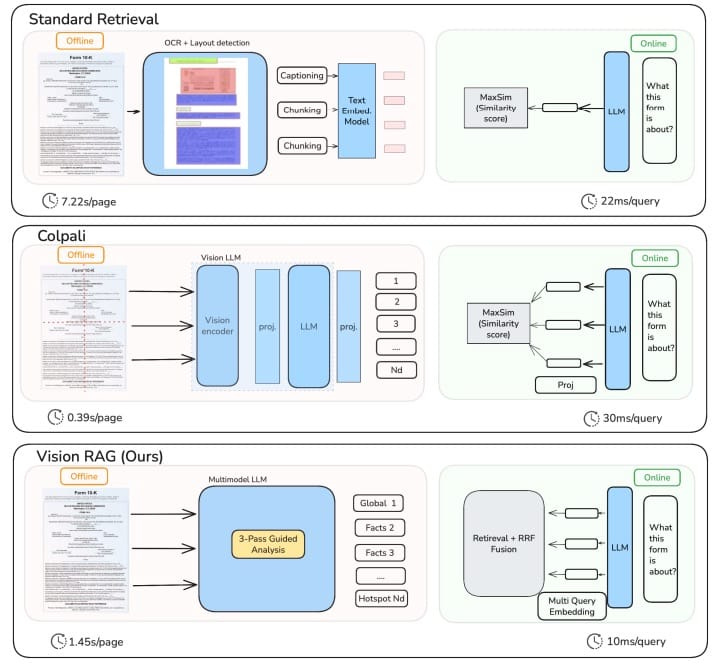

Week of Nov 24-30, 2025: Alibaba's 6B Z-Image impresses, Tencent's 1B HunyuanOCR beats larger models and APIs, VisionRAG uses 6-9x less memory than ColPali, and RynnVLA-002 boosts real-world robot success by 50%.

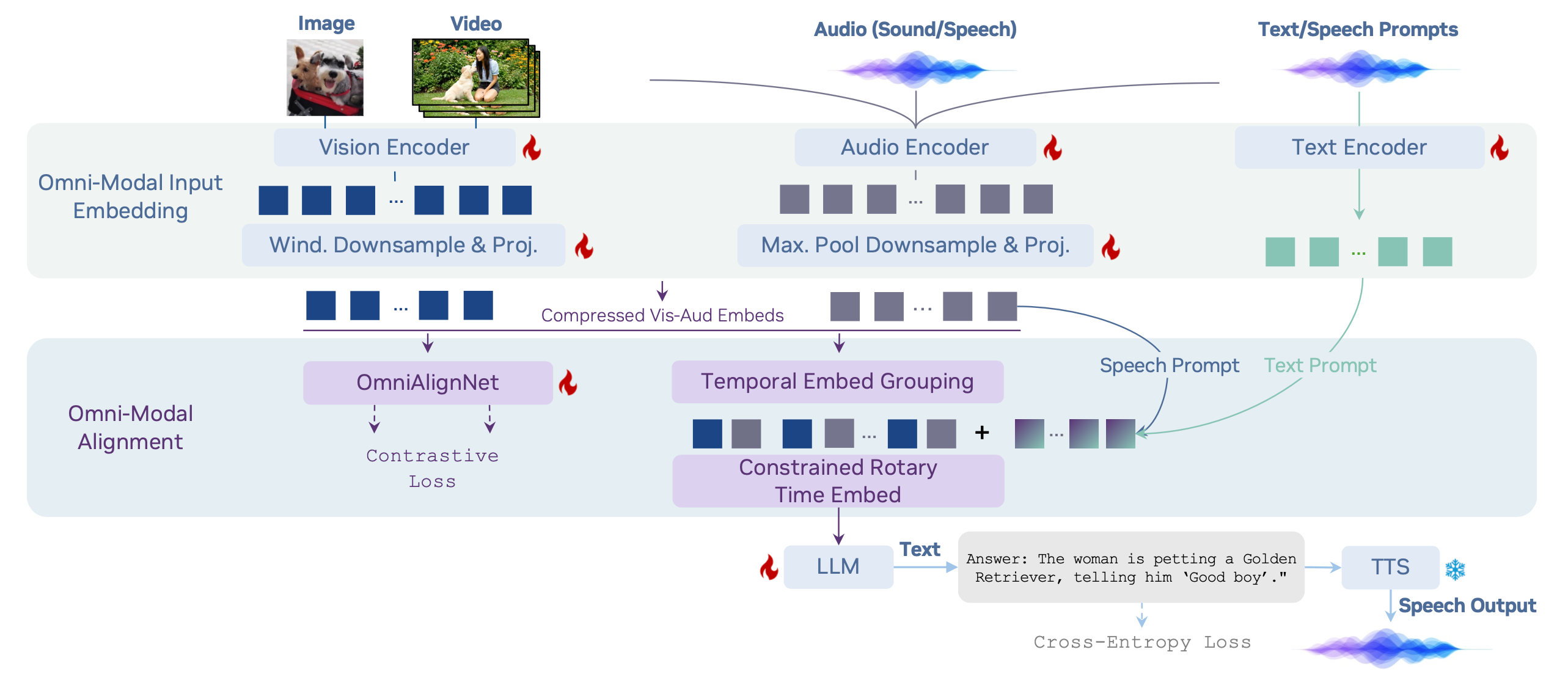

Multimodal Monday #33: Physical AI, Human Vision

Week of November 10 - November 16, 2025: Pelican-VL gives humanoid robots spatial intelligence, DeepMind teaches AI to see like humans, Marble creates 3D worlds from single images, and Meta opens speech recognition to 1,600+ languages.

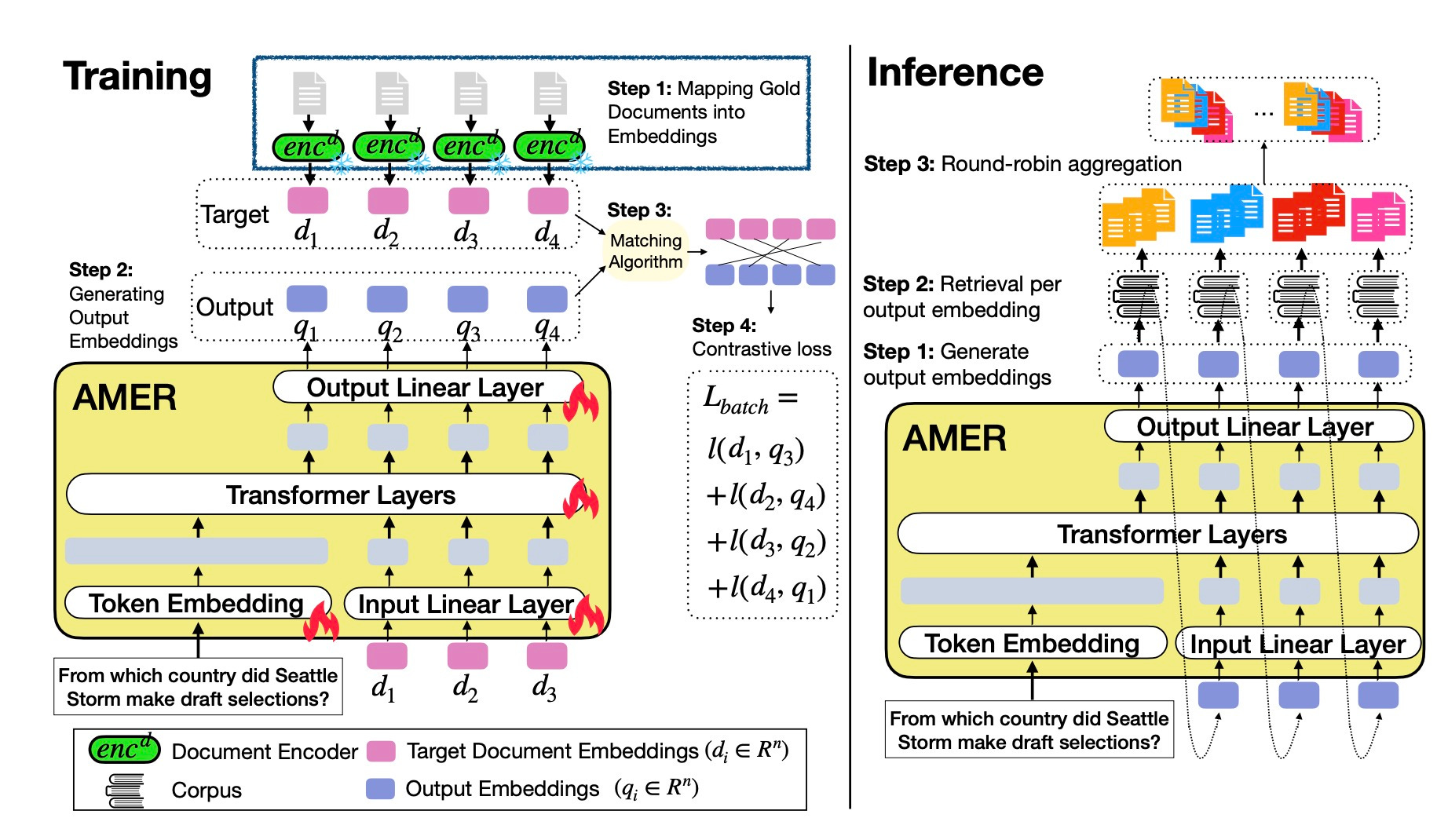

Multimodal Monday #32: Multi-Query Retrieval, Streaming Video

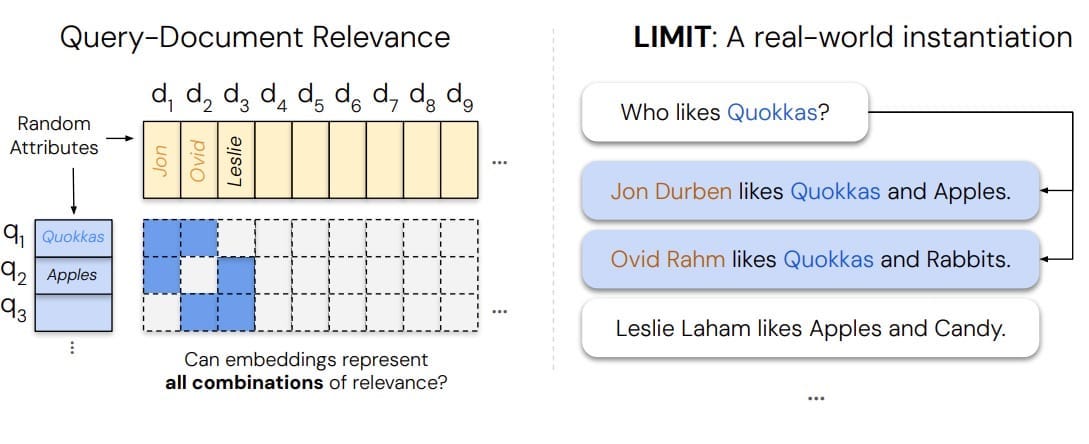

Week of November 3 - November 9, 2025: AMER shows 4-21% gains on complex queries by generating multiple embeddings, Adobe MotionStream hits 29 fps with interactive motion controls, Step-Audio-EditX edits voice emotion and style through text prompts, and GEN-0 trains robots for general skills.

Multimodal Monday #31: Visual Thinking, Longer Video

Google Latent Sketchpad lets models sketch thoughts before acting, Amazon Nova MME unifies search, Emu3.5 matches Google's Nano Banana locally, and BEAR reveals why AI fails physical tasks.

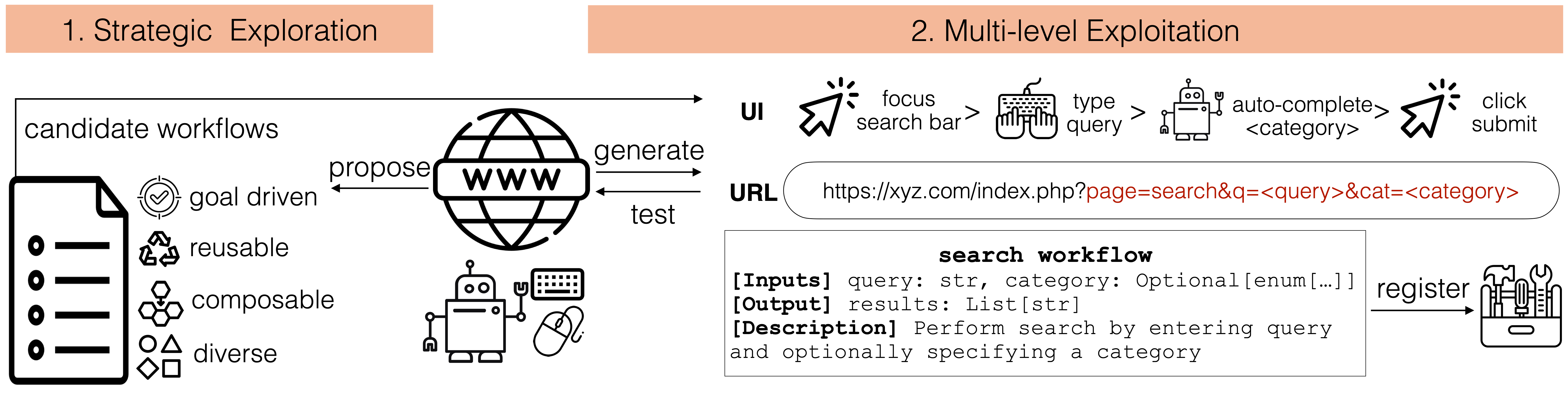

Multimodal Monday #30: Smarter Agents, Real-Time 3D

WALT and UltraCUA make websites API-smart, Seed3D 1.0 builds 3D assets from one image, DeepSeek-OCR compresses docs 10x with 97% accuracy via optical mapping, and AGILE lifts VLM accuracy from 9.5% to 82.8% with interactive puzzles.

Multimodal Monday #29: Sampling Smarts, Composable Control

Week of October 13-19, 2025

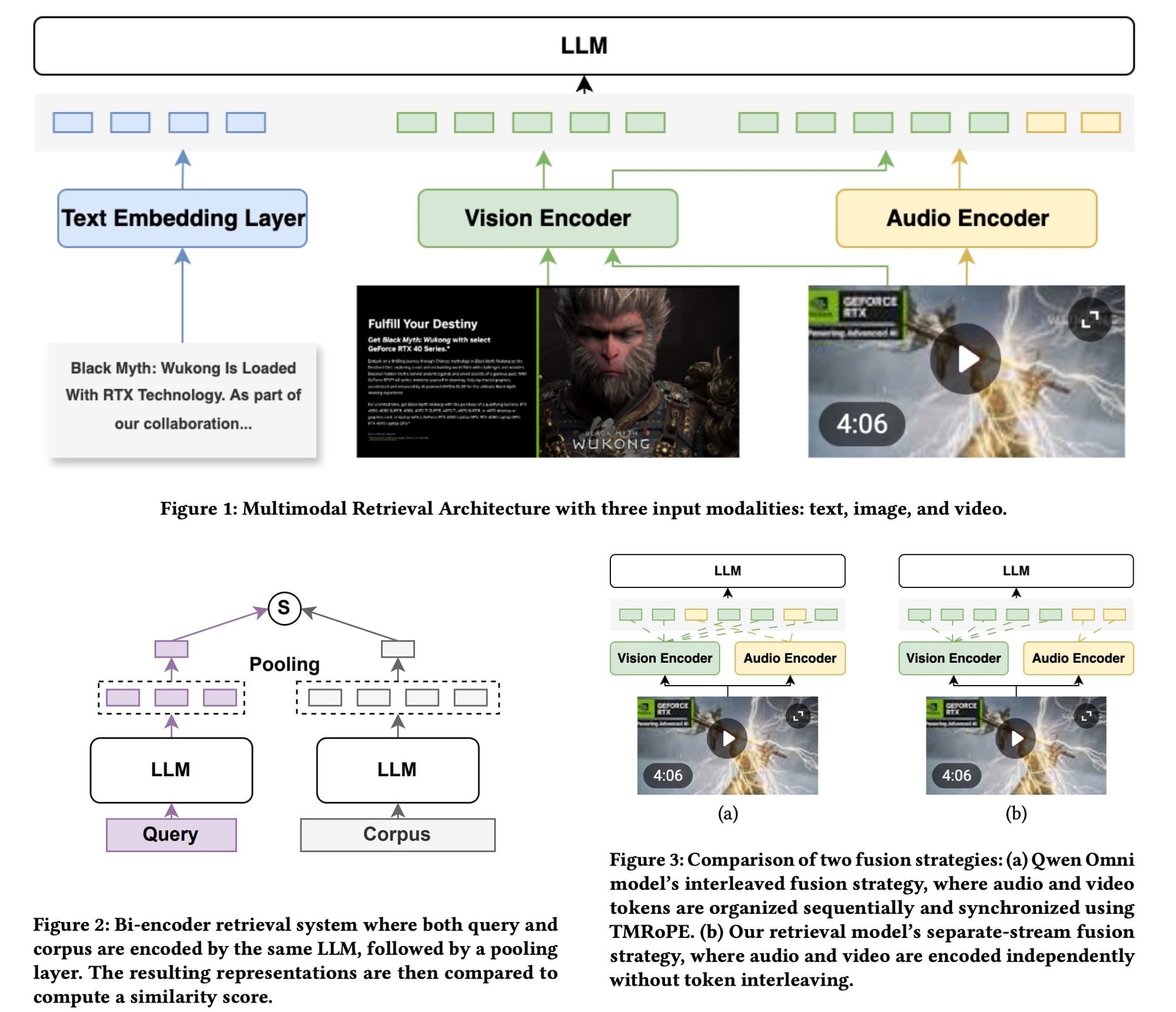

Multimodal Monday #28: Diffusion Thinks, Retrieval Unifies

Multimodal Monday #28: Fast-dLLM v2 diffuses text 2.5x faster, Omni-Embed-Nemotron searches across modalities, and Think-Then-Embed reasons its way to the top of MMEB-V2.

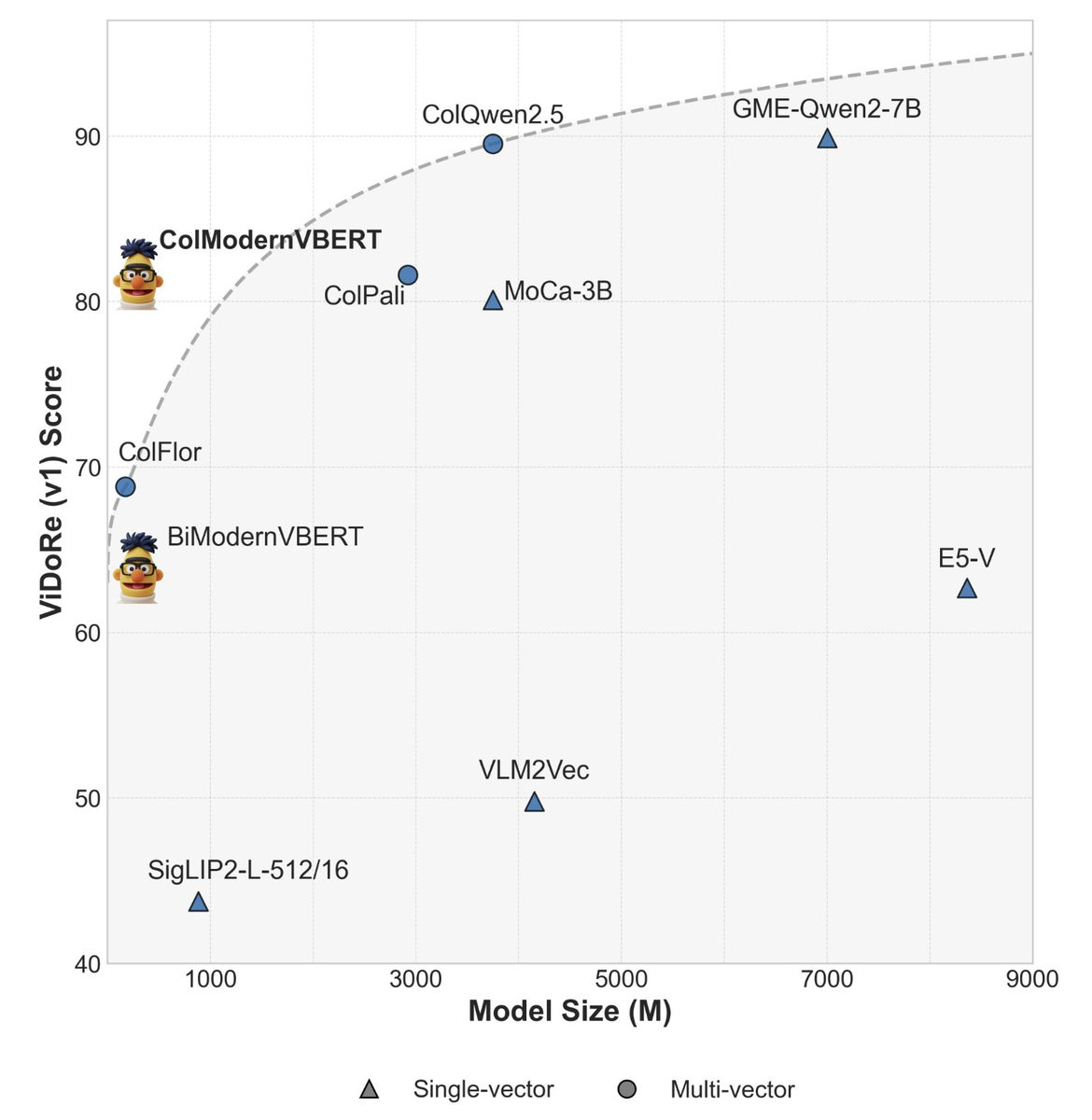

Multimodal Monday #27: Small Models Beat Giants

Multimodal Monday #27: ModernVBERT's 250M-parameter model beats models 10x its size, DocPruner slashes storage by 60%, and Claude Sonnet 4.5 codes for 30+ hours. Scale reimagined!

Multimodal Monday #26: Adaptive Retrieval, Visual Reasoning

Multimodal Monday #26: MetaEmbed scales retrieval on the fly, EmbeddingGemma beats giants with 308M params, and Veo 3 shows emergent reasoning.

Multimodal Monday #25: Mind Reading Meets Model Efficiency

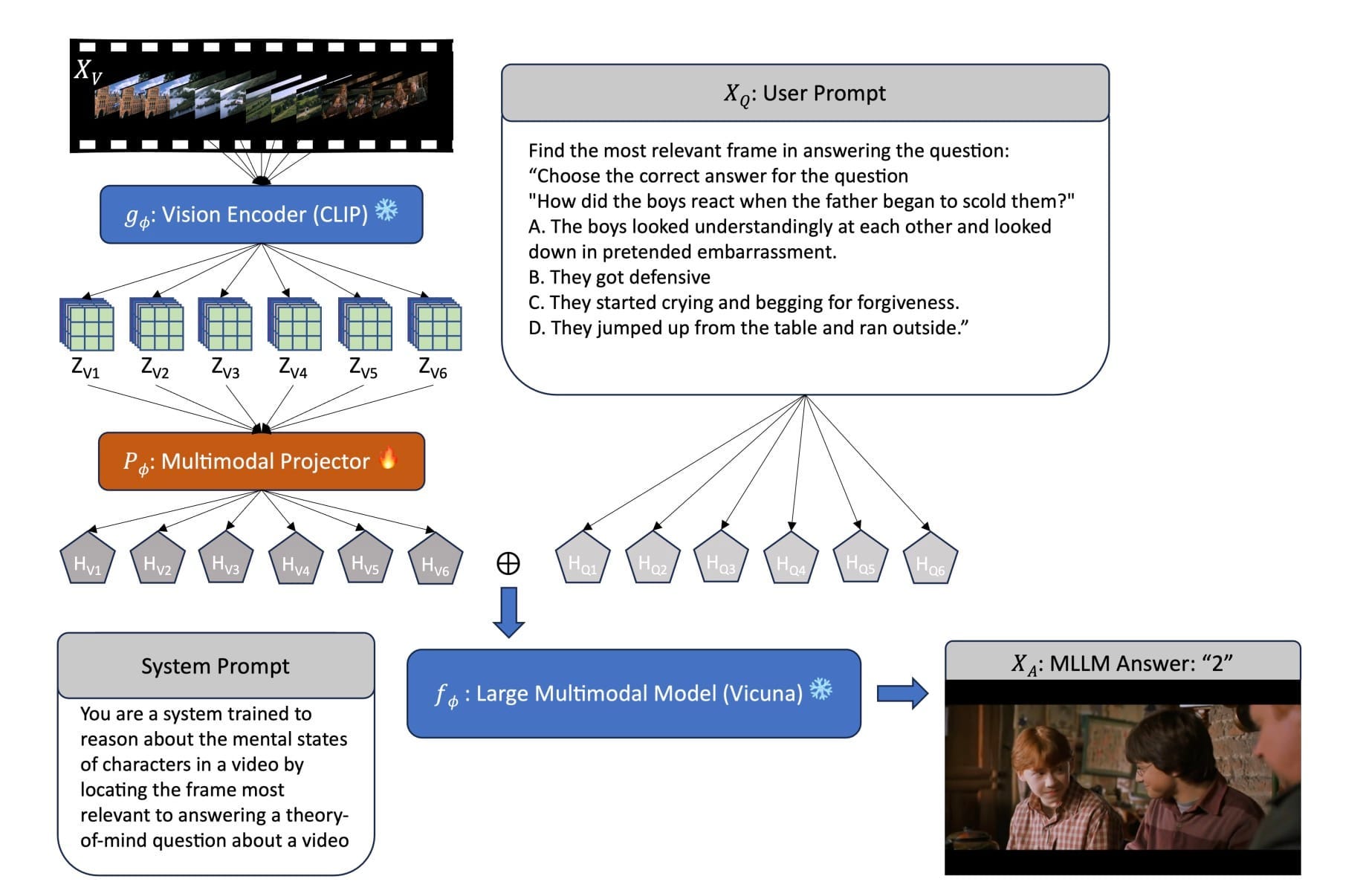

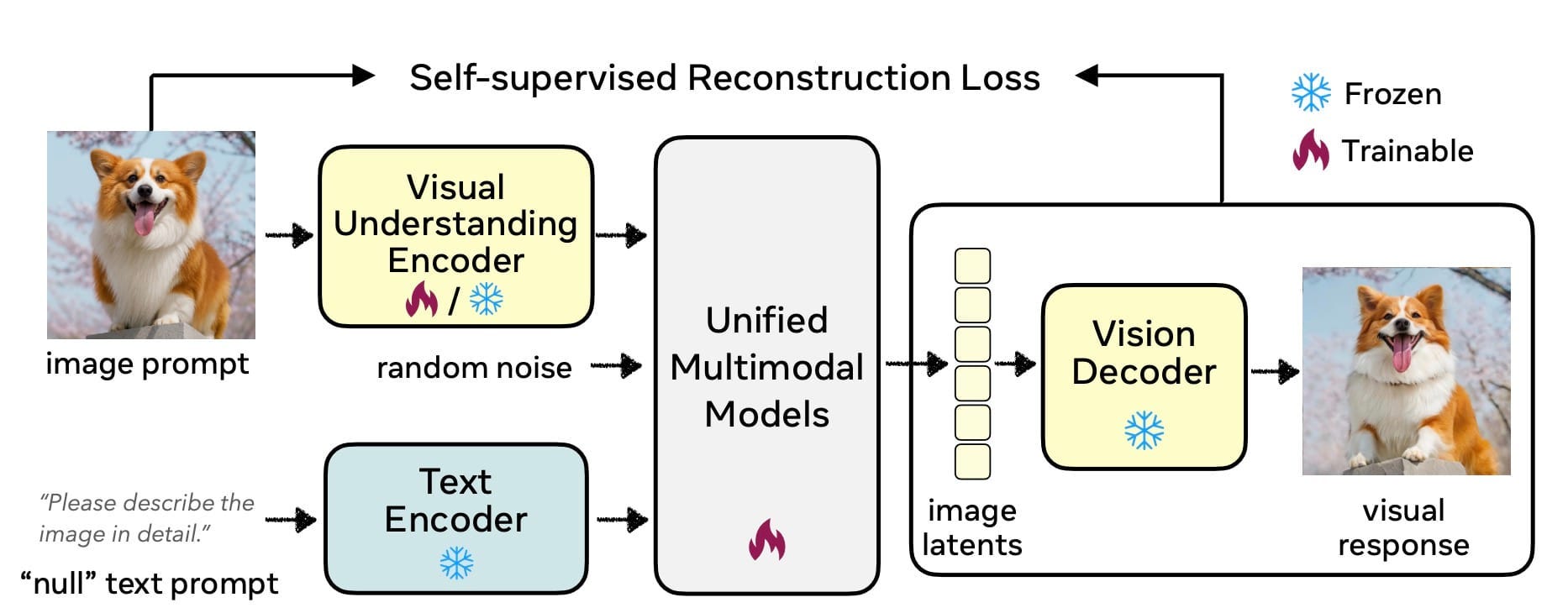

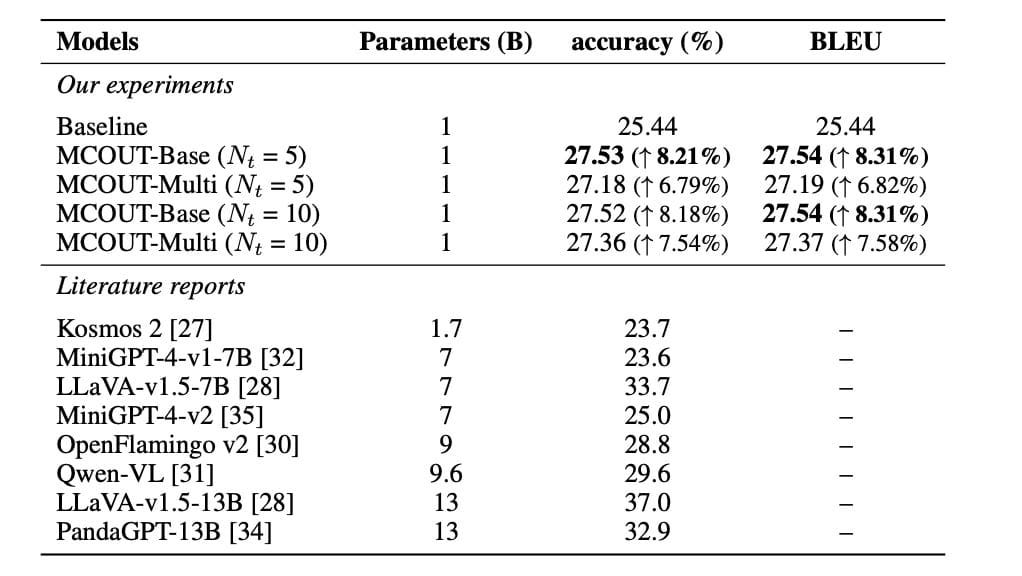

AI reads intentions in video, Moondream delivers frontier performance at 2B params, and Alibaba's open-source release matches OpenAI. Understanding "why" changes everything!

Multimodal Monday #24: Post-Training Prevails, Neural Rendering Rises

RecA boosts quality 17% with 27 GPU-hours, RenderFormer replaces graphics pipelines with transformers, and Lucy-14B delivers instant video. Alignment beats retraining!

Multimodal Monday #23: Efficiency Evolves, Agentic Advance

This week in Multimodal AI - August 25 - September 7, 2025

Multimodal Monday #22: Spatial Crisis, Trust Bottleneck

Week of August 18-24, 2025

Multimodal Monday #21: Multimodal Reality, Expert Breakthrough

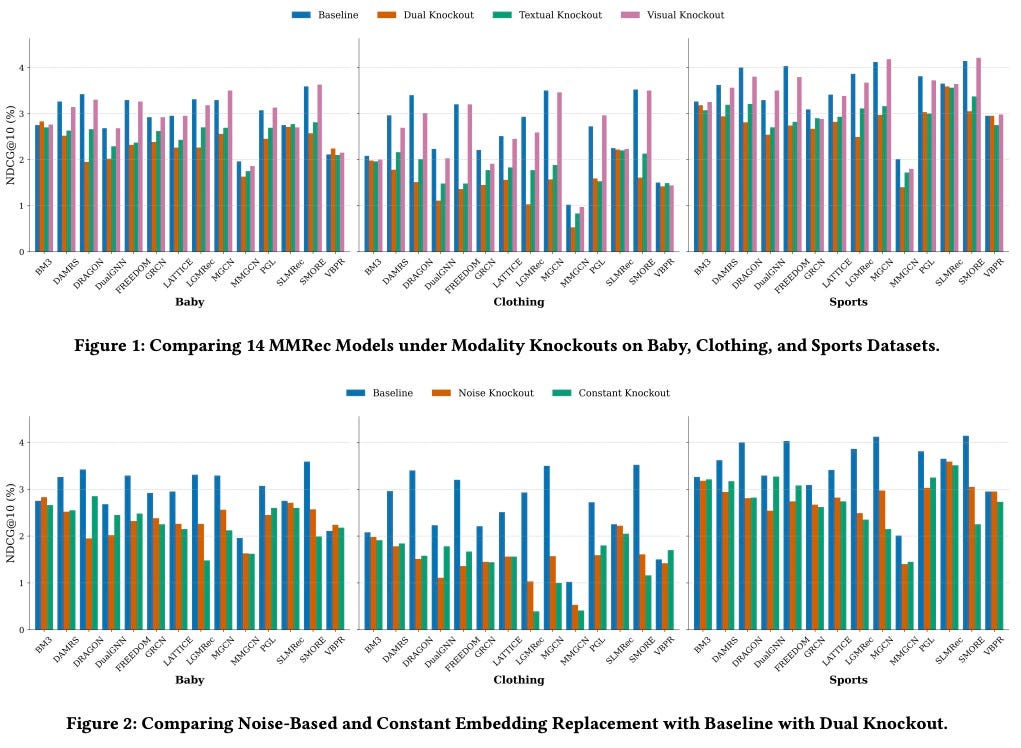

Multimodal Monday #21: Text crushes visuals in recommendations, GPT-5 beats doctors by 24-29%, and Spotify's AI evaluates podcasts. AI surpasses human limits!

Multimodal Monday #20: Multimodal Myths, Generative Frontiers

Multimodal Monday #20: Study challenges multimodal hype, Genie 3 builds 3D from text, and TURA blends real-time data. The future demands targeted deployment!

Multimodal Monday #19: Chinese AI Surge, Open Source Wins

Multimodal Monday #19: Wan 2.2 rolls out with a week of daily feature releases, HairCUP refines 3D avatars, and E-FineR boosts recognition. Open source Chinese AI surges ahead!