Tencent HY-Motion 1.0

Tencent released a billion-parameter text-to-motion model using a Diffusion Transformer architecture and flow matching. The model generates 3D character animations from text prompts.

Diffusion Knows Transparency (DKT)

DKT repurposes video diffusion models for transparent object depth and normal estimation. It achieves zero-shot SOTA on ClearPose/DREDS benchmarks, runs at 0.17s per frame, and maintains temporal consistency across videos.

LongVideoAgent

A multi-agent framework where a master LLM coordinates a grounding agent for segment localization and a vision agent for observation extraction. The system uses reinforcement learning to optimize multi-agent cooperation with step limits.
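To make the coordination pattern concrete, here is a minimal sketch of a master loop that calls a grounding step, then an observation step, and stops at a step limit. Everything in it is a hypothetical stand-in: `grounding_agent`, `vision_agent`, and `master` are illustrative names, the word-overlap heuristic replaces real models, and the reinforcement learning part of the framework is not shown.

```python
def grounding_agent(question, segments):
    """Hypothetical stand-in: return the index of the segment whose
    caption shares the most words with the question."""
    q_words = set(question.lower().split())
    return max(
        range(len(segments)),
        key=lambda i: len(q_words & set(segments[i].lower().split())),
    )


def vision_agent(segment):
    """Hypothetical stand-in: 'observe' a segment by echoing its caption."""
    return f"observed: {segment}"


def master(question, segments, max_steps=3):
    """Toy master policy: localize first, then observe, never exceeding
    max_steps agent calls. A real master would be an LLM choosing actions."""
    notes = []
    located = None
    for _ in range(max_steps):
        if located is None:
            located = grounding_agent(question, segments)
            notes.append(("ground", located))
        else:
            notes.append(("observe", vision_agent(segments[located])))
            break
    return notes


segments = ["a dog runs in the park", "a chef cooks pasta", "credits roll"]
trace = master("what pasta does the chef make", segments)
print(trace)
```

With a step limit of 1 the loop only has budget for localization, which shows why a step cap forces the master to spend its calls carefully.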

Qwen-Image-2512

Qwen’s new text-to-image model delivers more realistic humans, finer natural textures, and stronger text rendering. The team reports state-of-the-art image generation quality.

Yume-1.5

A text-controlled interactive world generation model that creates explorable 3D environments. Users can navigate and interact with generated spaces in real time.

TwinFlow

Enables one-step generation on large models using self-adversarial flows. The approach eliminates iterative sampling while maintaining output quality.

Stable Video Infinite 2.0 Pro

The new version launched with immediate ComfyUI wrapper support from Kijai. Models are already available for download and integration.

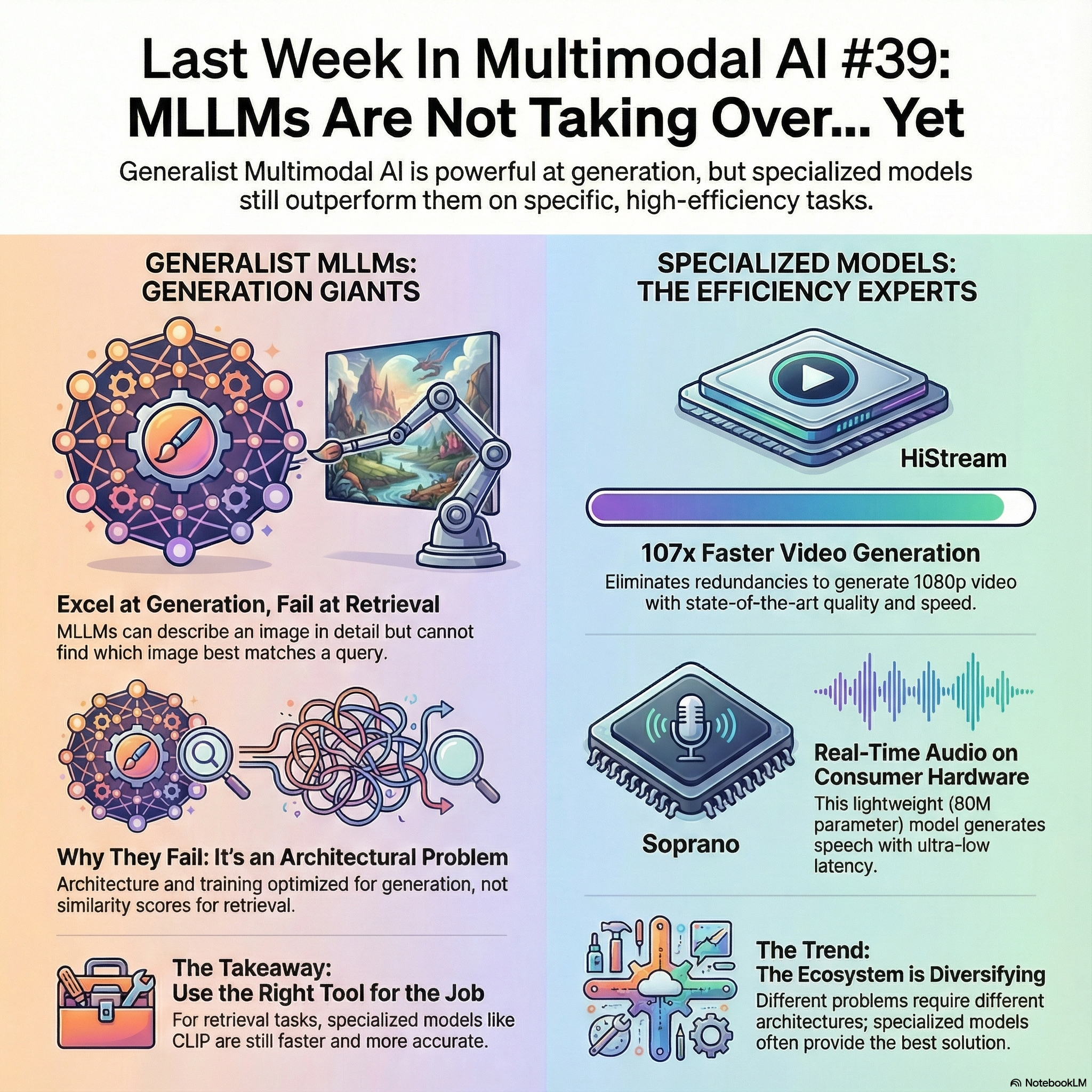

Soprano

An ultra-lightweight TTS model that generates 10 hours of 32 kHz audio in under 20 seconds. It streams with sub-15 ms latency using only 80M parameters and less than 1 GB of VRAM.
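As a quick sanity check on what those figures imply, the arithmetic below converts the claim into a real-time factor and a sample throughput. It uses only the numbers quoted above and treats "under 20 seconds" as exactly 20 seconds, so the results are lower bounds on the claimed speed, not measurements.

```python
# Figures taken from the claim above; 20 s is used as an upper bound.
audio_seconds = 10 * 60 * 60      # 10 hours of generated audio
wall_seconds = 20                 # claimed generation time (upper bound)
sample_rate_hz = 32_000           # 32 kHz output

# How many seconds of audio are produced per second of compute.
real_time_factor = audio_seconds / wall_seconds

# Total samples generated and the implied throughput.
total_samples = audio_seconds * sample_rate_hz
samples_per_second = total_samples / wall_seconds

print(f"real-time factor: at least {real_time_factor:.0f}x")
print(f"total samples: {total_samples:,}")
print(f"throughput: at least {samples_per_second:,.0f} samples/s")
```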

Wan-NVFP4

A fast video model claiming 28x faster render speeds than previous versions. Released by lightx2v on Hugging Face.

JavisGPT

A unified multi-modal LLM for sounding-video comprehension and generation. The model handles video analysis and audio-visual synthesis in one framework.

Dream-VL & Dream-VLA

Open vision-language and vision-language-action models using a diffusion language model backbone. Both models integrate visual understanding with either language or robotic action outputs.

HyperCLOVA X SEED Omni 8B

A unified multimodal model handling text, vision, audio, and video inputs with text, image, and audio outputs.

AMD ROCm

AMD published a guide for accelerating multimodal inference in vLLM using batch-level data parallel (DP) switches.

Generative Giants, Retrieval Weaklings: Why do Multimodal Large Language Models Fail at Multimodal Retrieval?

MLLMs like Qwen2-VL and Paligemma2 fail at zero-shot multimodal retrieval despite excelling at generation. Using sparse autoencoders, researchers identified three limitations: training objectives optimize for generation rather than retrieval, evaluation focuses on generative tasks, and autoregressive architectures compute poor similarity scores.
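For context on why similarity scores matter, embedding-based retrieval typically ranks candidates by the cosine similarity between a query vector and each candidate vector. The sketch below shows that ranking step on hand-made toy vectors. The vectors, the item names, and the `retrieve` helper are illustrative assumptions, not the paper's setup or any model's actual embeddings.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query_vec, candidates, k=1):
    """Return the names of the k candidates most similar to the query."""
    ranked = sorted(
        candidates,
        key=lambda name: cosine(query_vec, candidates[name]),
        reverse=True,
    )
    return ranked[:k]


# Toy embeddings (hypothetical); a real system would get these from a model.
candidates = {
    "cat_photo": [0.9, 0.1, 0.0],
    "dog_photo": [0.1, 0.9, 0.0],
    "car_photo": [0.0, 0.1, 0.9],
}
query = [1.0, 0.2, 0.0]
top2 = retrieve(query, candidates, k=2)
print(top2)
```

If a model's embeddings do not place related items close together, this ranking step returns poor results no matter how well the same model generates text.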

ReaSeq: Unleashing World Knowledge via Reasoning for Sequential Modeling

ReaSeq solves two problems in recommender systems: knowledge poverty in ID-based representations and systemic blindness to off-platform user interests. The framework uses reasoning to incorporate world knowledge into sequential modeling.

MegaRAG: Multimodal Knowledge Graph-Based Retrieval Augmented Generation

MegaRAG automatically constructs multimodal knowledge graphs integrating text, visual, and spatial information from documents. It uses a two-round, page-based approach where LLMs extract entities in parallel then refine the graph by retrieving relevant subgraphs.

Retrieval-augmented Prompt Learning for Pre-trained Foundation Models

This framework enhances prompt learning by decoupling knowledge from memorization. The approach addresses instability in low-resource settings where parametric models overfit to shallow patterns.

Latent Implicit Visual Reasoning

LIVR discovers visual reasoning tokens without explicit supervision. The method outperforms approaches requiring costly annotations.

Geometric Memory in Sequence Models

Google DeepMind identified geometric memory in deep sequence models, where embeddings encode global relationships between all entities including those never co-occurring in training. This contrasts with associative memory’s brute-force lookup approach.

Step-DeepResearch

A 32B parameter research agent matching OpenAI and Gemini DeepResearch through atomic capability training. It decomposes research into planning, information gathering, verification, and writing, achieving 61.42 on ResearchRubrics.

SpatialTree

A 4-level cognitive hierarchy mapping spatial abilities in MLLMs from perception to agentic competence. Benchmarks 27 sub-abilities across 16 models and reveals transfer patterns.

FlowBlending

Stage-aware multi-model sampling for fast and high-fidelity video generation. The approach optimizes different models for different stages of the generation process.

SpaceTimePilot

Adobe’s video diffusion model disentangles space and time for controllable rendering. From a single input video, it enables independent control of camera viewpoint and motion for bullet-time, slow motion, and mixed trajectories.

HiStream

Meta’s autoregressive framework for 1080p video generation eliminates spatial, temporal, and timestep redundancy. HiStream achieves SOTA quality with up to 107.5x speedup.

InsertAnywhere

Bridges 4D scene geometry and diffusion models for realistic video object insertion. The method maintains spatial and temporal consistency across frames.

Robust-R1

A framework making multimodal models robust to visual degradations through explicit degradation-aware reasoning chains. Achieves SOTA robustness on R-Bench while maintaining interpretability.

StoryMem

ByteDance’s multi-shot long video storytelling framework with memory. The system maintains narrative consistency across extended video sequences.

Spatia

Microsoft’s video generation system maintains a 3D scene point cloud as persistent spatial memory. Enables long-horizon, spatially consistent video generation with explicit camera control and 3D-aware editing.

DiffThinker

Enables generative multimodal reasoning with diffusion models. The approach integrates reasoning capabilities directly into the diffusion generation process.

ComfyUI Segmentation Agent

Adam Barbato released an LLM-based character segmentation agent for ComfyUI using SAM 3.

CosyVoice 3 ComfyUI

Machine Delusion released a voice cloning node pack featuring CosyVoice 3 for ComfyUI.

SAM3 Video Tracking in X-AnyLabeling

Important_Priority76 integrated SAM3 video object tracking into X-AnyLabeling for easy annotation workflows.

AI Reskinning

Ege Berkina demonstrated that, with current tools, the barriers to AI reskinning have nearly disappeared.