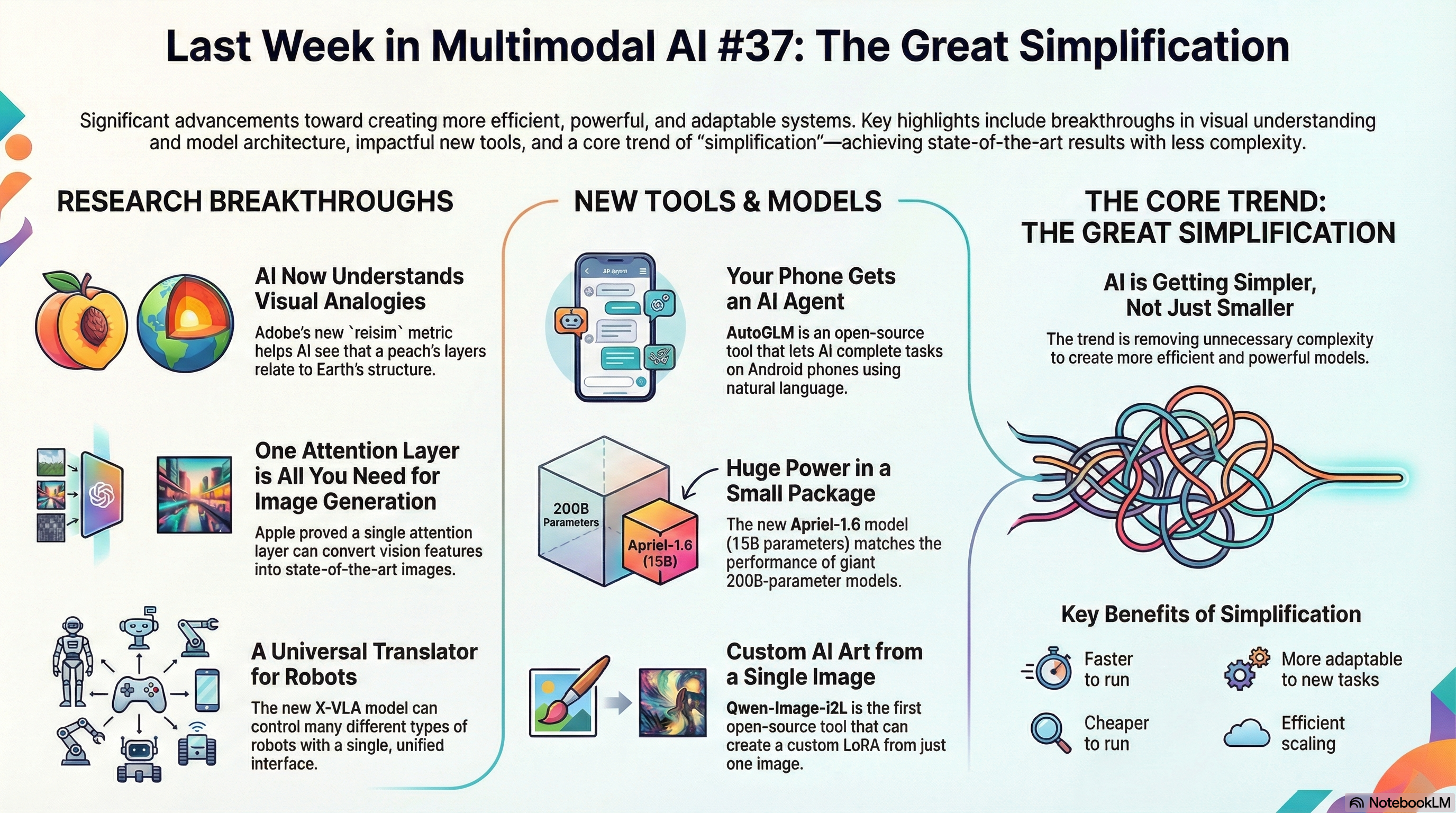

Relational Visual Similarity

Adobe Research and UW-Madison developed relsim, a metric that captures analogical relationships between images rather than surface-level features. For example, it treats a peach and Earth as relationally similar because both are layered: the peach’s skin, flesh, and pit mirror Earth’s crust, mantle, and core.
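The digest does not say how relsim scores a pair of images. As a hedged sketch, assuming it compares learned image embeddings with cosine similarity (a common choice for learned similarity metrics), the scoring step looks like this. The vectors `peach`, `earth`, and `apple` are hypothetical toy values standing in for an encoder’s output, not real relsim embeddings, and the sketch does not show how relational features are learned.

```python
import numpy as np

def cosine_similarity(a: np.ndarray, b: np.ndarray) -> float:
    """Cosine of the angle between two embedding vectors (1.0 = same direction)."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Hypothetical toy "relational" embeddings; a real relsim-style encoder would
# produce these from images. Dimension 0 loosely stands for "layered structure".
peach = np.array([0.9, 0.1, 0.2])
earth = np.array([0.8, 0.0, 0.3])
apple = np.array([0.1, 0.9, 0.2])

print(round(cosine_similarity(peach, earth), 3))  # high: shared layered structure
print(round(cosine_similarity(peach, apple), 3))  # lower: different relational profile
```

Under these toy values, the peach scores closer to Earth than to the apple, which is the kind of judgment a relational metric is meant to make.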

One Attention Layer Is Enough

Apple shows that a single attention layer is enough to adapt pretrained vision features for state-of-the-art image generation. The approach simplifies diffusion models without sacrificing quality.
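The digest does not give the architecture. As a hedged sketch, assuming the layer is a standard single-head self-attention over the patch features of a frozen pretrained encoder, followed by a linear projection to a smaller latent width, one such layer looks like this in NumPy. All sizes and weight names (`w_q`, `w_k`, `w_v`, `w_out`) are hypothetical and randomly initialized; this is not Apple’s code, and the diffusion model that would consume the latents is not shown.

```python
import numpy as np

def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    # Subtract the row max for numerical stability before exponentiating.
    z = x - x.max(axis=axis, keepdims=True)
    e = np.exp(z)
    return e / e.sum(axis=axis, keepdims=True)

def single_attention_layer(feats, w_q, w_k, w_v, w_out):
    """One single-head self-attention layer.

    feats: (num_patches, d_in) features from a frozen pretrained encoder.
    Returns (num_patches, d_latent) latents, where d_latent = w_out.shape[1].
    """
    q, k, v = feats @ w_q, feats @ w_k, feats @ w_v
    scores = q @ k.T / np.sqrt(q.shape[-1])  # scaled dot-product attention
    attn = softmax(scores, axis=-1)          # each row sums to 1
    return (attn @ v) @ w_out                # project to the latent width

rng = np.random.default_rng(0)
num_patches, d_in, d_head, d_latent = 256, 768, 64, 32  # hypothetical sizes
feats = rng.standard_normal((num_patches, d_in))        # stand-in encoder output
w_q, w_k, w_v = (rng.standard_normal((d_in, d_head)) / np.sqrt(d_in) for _ in range(3))
w_out = rng.standard_normal((d_head, d_latent)) / np.sqrt(d_head)

latents = single_attention_layer(feats, w_q, w_k, w_v, w_out)
print(latents.shape)
```

Only the four small weight matrices would be trained in this setup; the pretrained encoder producing `feats` stays fixed.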

Unison: A Fully Automatic, Task-Universal, and Low-Cost Multimodal Framework

Unison automates multimodal tasks across text, images, and video without task-specific retraining. The framework uses efficient fusion techniques that work across different modalities with minimal computational overhead.

MokA: Multimodal Low-Rank Adaptation for MLLMs

MokA reveals that current multimodal fine-tuning wastes parameters and proposes a low-rank method that improves visual-language integration. The approach beats standard fine-tuning on visual grounding benchmarks while using fewer parameters.
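MokA’s exact formulation is not described here. For reference, the sketch below shows plain LoRA, the standard low-rank adaptation technique that multimodal variants like MokA build on, not MokA itself: the pretrained weight W stays frozen and only two small matrices are trained, giving an effective weight W + (alpha / r) · B · A. The hidden size, rank, and function names are hypothetical.

```python
import numpy as np

def lora_forward(x, w_frozen, a, b, alpha):
    """Forward pass of a linear layer with a LoRA update.

    w_frozen: (d_out, d_in) pretrained weight, never updated.
    a: (r, d_in) and b: (d_out, r) are the only trainable parameters.
    """
    r = a.shape[0]
    delta = (alpha / r) * (b @ a)  # low-rank update, rank <= r
    return x @ (w_frozen + delta).T

def trainable_params(d_out, d_in, r=None):
    """Parameter count: full fine-tuning if r is None, else LoRA with rank r."""
    return d_out * d_in if r is None else r * (d_in + d_out)

d = 4096  # hypothetical hidden size
full = trainable_params(d, d)
lora = trainable_params(d, d, r=8)
print(full, lora, f"{lora / full:.2%}")

rng = np.random.default_rng(0)
x = rng.standard_normal((2, 16))
w = rng.standard_normal((16, 16))
a = rng.standard_normal((4, 16)) * 0.01
b = np.zeros((16, 4))  # B starts at zero, so the layer initially matches W
y = lora_forward(x, w, a, b, alpha=8)
```

With a hidden size of 4096 and rank 8, LoRA trains 65,536 parameters per weight matrix instead of 16,777,216, about 0.39%.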

Computational Emotion Analysis with Multimodal LLMs

Researchers evaluate how well multimodal LLMs analyze emotions from audio-visual data. The paper identifies gaps in current methods and shows where generative AI can improve affective computing.

AutoGLM

Z.ai released AutoGLM, an open-source framework that completes tasks on Android phones through natural language commands. AutoGLM-Phone-9B is available on Hugging Face and ModelScope.

Apriel-1.6-15B-Thinker

ServiceNow’s 15B-parameter multimodal model scores 57 on the Artificial Analysis Intelligence Index, on par with models around 200B parameters. It delivers that reasoning performance at less than a tenth of their size.

GLM-4.6V

Z.ai released GLM-4.6V, a vision-language model with tool calling and a 128K-token context window. The model supports multilingual development and API integration.

GPT-5.2

OpenAI released GPT-5.2, its latest frontier model, with advances in reasoning, generation, and multimodal understanding.

DMVAE

Tencent and PKU released DMVAE, a VAE whose latent distribution can be matched to any reference distribution. The model achieves state-of-the-art image synthesis with fewer training epochs.
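The digest does not explain DMVAE’s matching mechanism, and it may not use a KL penalty at all. As a hedged point of comparison, a standard VAE pulls its latent distribution toward a fixed reference, usually N(0, I), by minimizing a KL divergence. The sketch computes the closed-form KL between diagonal Gaussians, where the reference mean and variance are free parameters; the latent statistics and the alternative reference are hypothetical values.

```python
import numpy as np

def gaussian_kl(mu_q, var_q, mu_p, var_p):
    """KL(q || p) for diagonal Gaussians q and p, summed over latent dimensions."""
    mu_q, var_q, mu_p, var_p = map(np.asarray, (mu_q, var_q, mu_p, var_p))
    per_dim = 0.5 * (np.log(var_p / var_q) + (var_q + (mu_q - mu_p) ** 2) / var_p - 1.0)
    return float(per_dim.sum())

# Hypothetical encoder output statistics for one image's latent.
mu_q = np.array([0.5, -0.2])
var_q = np.array([0.8, 1.1])

# Standard VAE: the reference is fixed to N(0, I).
print(gaussian_kl(mu_q, var_q, np.zeros(2), np.ones(2)))
# Hypothetical alternative reference with its own mean and variance.
print(gaussian_kl(mu_q, var_q, np.array([0.5, 0.0]), np.array([0.9, 1.0])))
```

The KL is zero only when the latent distribution equals the reference, so minimizing it during training pulls the latents toward whichever reference is chosen.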

The Great Simplification

We’re watching complexity collapse in real time. Apple needs one attention layer where others use dozens. Unison handles tasks across modalities without task-specific retraining. MokA beats standard fine-tuning with a fraction of the parameters. This isn’t about making things smaller. It’s about removing the cruft that never belonged there.

What This Means for You

Your multimodal systems can run faster and cheaper. Lighter models fit on hardware that was out of reach last year. You can index a billion images on a single GPU.

Your systems can become more adaptable. Unison takes on new tasks without task-specific retraining. You can build applications that were too complex before.

Your models become more transparent. Low-rank adaptation means fewer parameters to debug. You can explain why your system retrieved a specific image.

This is multimodal AI for teams with real constraints, not just for companies with unlimited compute budgets.