HART

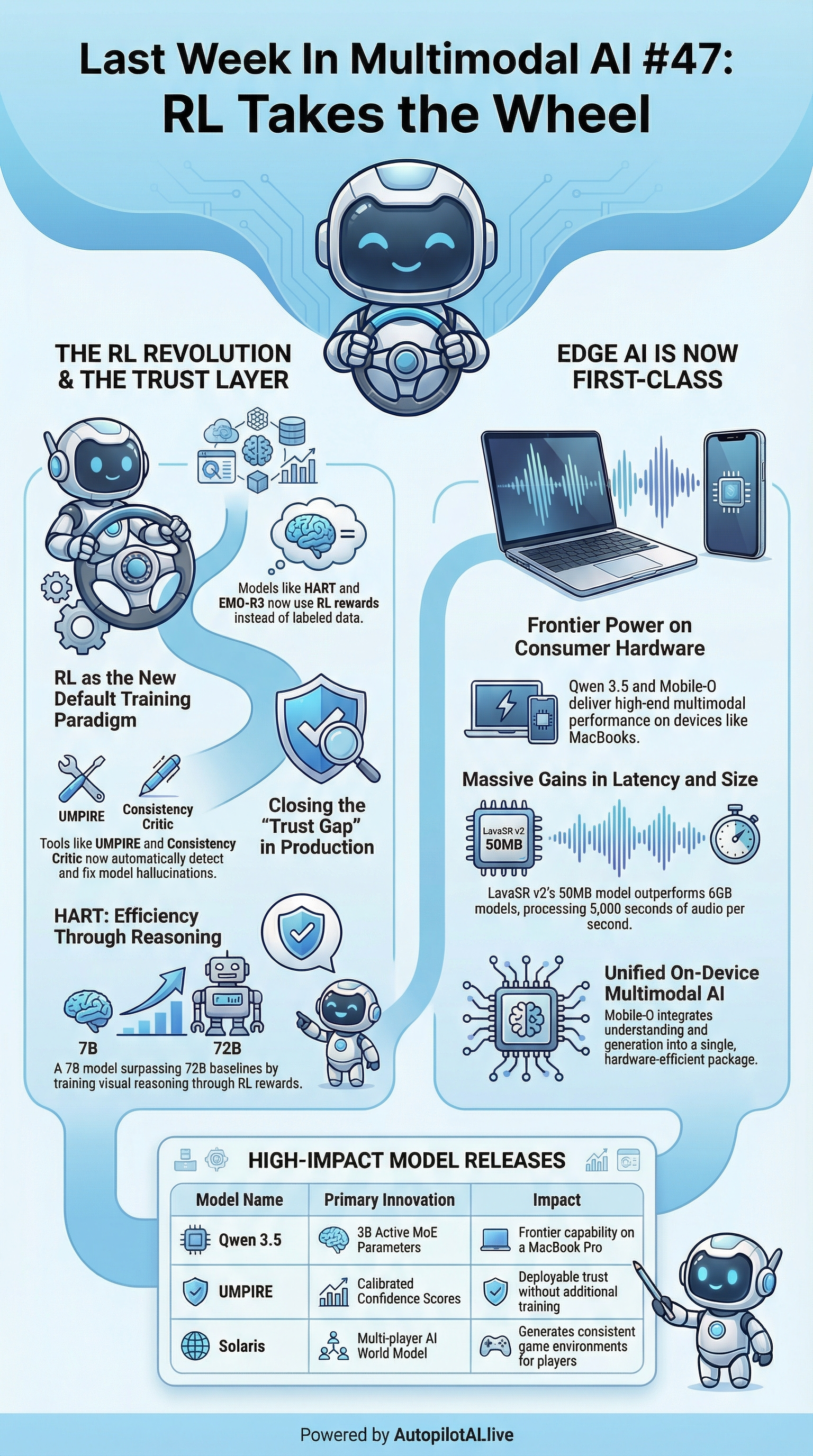

[https://arxiv.org/abs/2602.23615] — Trains visual reasoning entirely from RL rewards, no labeled data. A 7B model surpasses 72B baselines on high-resolution benchmarks. The gap between “good data” and “good reward signal” is closing fast.

EMO-R3

Optimization procedures of (a) general groundingbased methods without bounding-box annotations and (b) our proposed model. * EMO-R3 [https://arxiv.org/abs/2602.23802] (GitHub [https://github.com/xiaomi-research/emo-r3]) — Xiaomi Research adds a reflective RL loop for emotional reasoning, letting models critique and revise their own affective inferences. Standard RL methods like GRPO miss the nuance; this doesn’t.

OpenClaw-RL

Illustration of the motivation. * OpenClaw-RL [https://github.com/Gen-Verse/OpenClaw-RL] (X/Twitter [https://x.com/YinjieW2024/status/2027011510103363837?s=20]) — Host any LLM on OpenClaw-RL’s server and it continuously self-improves through RL, privately, without redeployment. Fully open-source.

Reasoning-Driven Multimodal LLMs for Domain Generalization

[https://arxiv.org/html/2602.23777v1] — Embeds explicit reasoning steps into multimodal LLMs. Result: substantially better cross-domain transfer — critical for real deployments where the test distribution never matches training.

Qwen 3.5 Medium & Small Series

Qwen 3.5 Medium & Small Series [https://github.com/QwenLM/Qwen3.5] (HuggingFace [https://huggingface.co/collections/Qwen/qwen35] | HuggingFace [https://t.co/wFMdX5pDjU]) — The 35B-A3B MoE uses only 3B active parameters and outperforms its 235B predecessor. Runs on a MacBook Pro. Natively multimodal, 201 languages, Apache 2.0.

Mobile-O

Small Series. * Mobile-O [https://arxiv.org/abs/2602.20161] (HuggingFace [https://huggingface.co/Amshaker/Mobile-O-1.5B]) — Unified multimodal understanding and generation in a single model on consumer hardware. One of the most concrete steps yet toward truly on-device multimodal AI.

LavaSR v2

Comparison of our approach with existing unified models. * LavaSR v2 [https://github.com/ysharma3501/LavaSR] (HuggingFace [https://huggingface.co/YatharthS/LavaSR]) — A 50MB model that outperforms 6GB diffusion models on audio enhancement and processes ~5,000 seconds of audio per second of compute. Immediately useful as an audio preprocessor in multimodal pipelines.

Inception Mercury 2

[https://www.inceptionlabs.ai/blog/introducing-mercury-2] — Diffusion applied to text generation itself, hitting ~1,000 tokens/sec on Blackwell. Not on-device, but changes the latency math for agentic multimodal loops entirely.

VGUBench

[https://arxiv.org/abs/2602.23711] — Does a unified model say the same thing whether it answers in text or image? This benchmark systematically asks that question and finds meaningful cross-modal inconsistencies. An important diagnostic for anyone deploying unified systems.

UMPIRE

The pipeline of VGUBench construction. * UMPIRE [https://arxiv.org/abs/2602.24195] — Attaches calibrated confidence scores to multimodal model outputs with zero additional training. Knowing when not to trust the model is now a deployable feature, not a research goal.

The Consistency Critic

[https://ouyangziheng.github.io/ImageCritic-Page/] (HuggingFace [https://huggingface.co/ziheng1234/ImageCritic] | GitHub [https://github.com/HVision-NKU/ImageCritic]) — Takes a generated image and reference, then surgically corrects inconsistencies (wrong text, attribute mismatches, continuity errors) while leaving the rest untouched. Plugs directly into production image pipelines.

Quiver’s Arrow 1.0

Quiver’s Arrow 1.0 [https://quiver.ai/] — Generates and edits actual SVG code rather than rasterized outputs, scalable, animatable, designer-editable. Targets the production workflow gap that image-generation models leave open.

Google Gemini 3.1 Flash Image

[https://gemini.google.com/] (codenamed “Nano Banana 2”) — Native multimodal image generation now embedded across Gemini chat, Search AI Mode, and Lens. Tightly integrated rather than bolted on.

Solaris

[https://solaris-wm.github.io/] (HuggingFace [https://huggingface.co/collections/nyu-visionx/solaris-models]) — The first multi-player AI world model, generating consistent game environments for simultaneous players. Ships with 12.6M frames of gameplay data and open training code.

LoRWeB

[https://research.nvidia.com/labs/par/lorweb/] (GitHub [http://github.com/NVlabs/LoRWeB] | HuggingFace [https://huggingface.co/hilamanor/lorweb]) — NVIDIA’s method for composing and interpolating across visual analogies in diffusion models. Extends expressive range without retraining.

IRPAPERS

[https://arxiv.org/abs/2602.17687] (GitHub [https://github.com/weaviate/IRPAPERS] | HuggingFace [https://huggingface.co/datasets/weaviate/irpapers-queries]) — Weaviate’s benchmark for retrieval and QA over visually complex scientific documents. Fills a real gap in evaluation infrastructure.

Large Multimodal Models as general in-context classifiers

[https://arxiv.org/abs/2602.23229] — LMMs can match or beat contrastive VLMs on classification tasks with just a few in-context examples. No fine-tuning required.

Tekinium (Nous Research)

[https://x.com/teknium/status/2027279559012577410?s=42] — Added OCR and document understanding skills to Hermes-agent, their open-source agent framework.

Prithiv Sakthi

[https://x.com/prithivMLmods/status/2027347332455698746?s=20] — Released a Qwen3-VL-Video-Grounding demo with point tracking, text-guided detection, and real-time bounding box detection across frames, powered by Qwen3-VL-4B.

Emily First

[https://x.com/iamemily2050/status/2026473288562516327?s=42] — Shared a new skill worth checking out.

Some_Smile5927 on r/StableDiffusion

[https://www.reddit.com/r/StableDiffusion/comments/1rfvx7c/wan_22s_4x_frame_interpolation_capability/?utm_source=share&utm_medium=web3x&utm_name=web3xcss&utm_term=1&utm_content=share_button] — A compelling 4x frame interpolation comparison showing the current ceiling of open-source video smoothing.