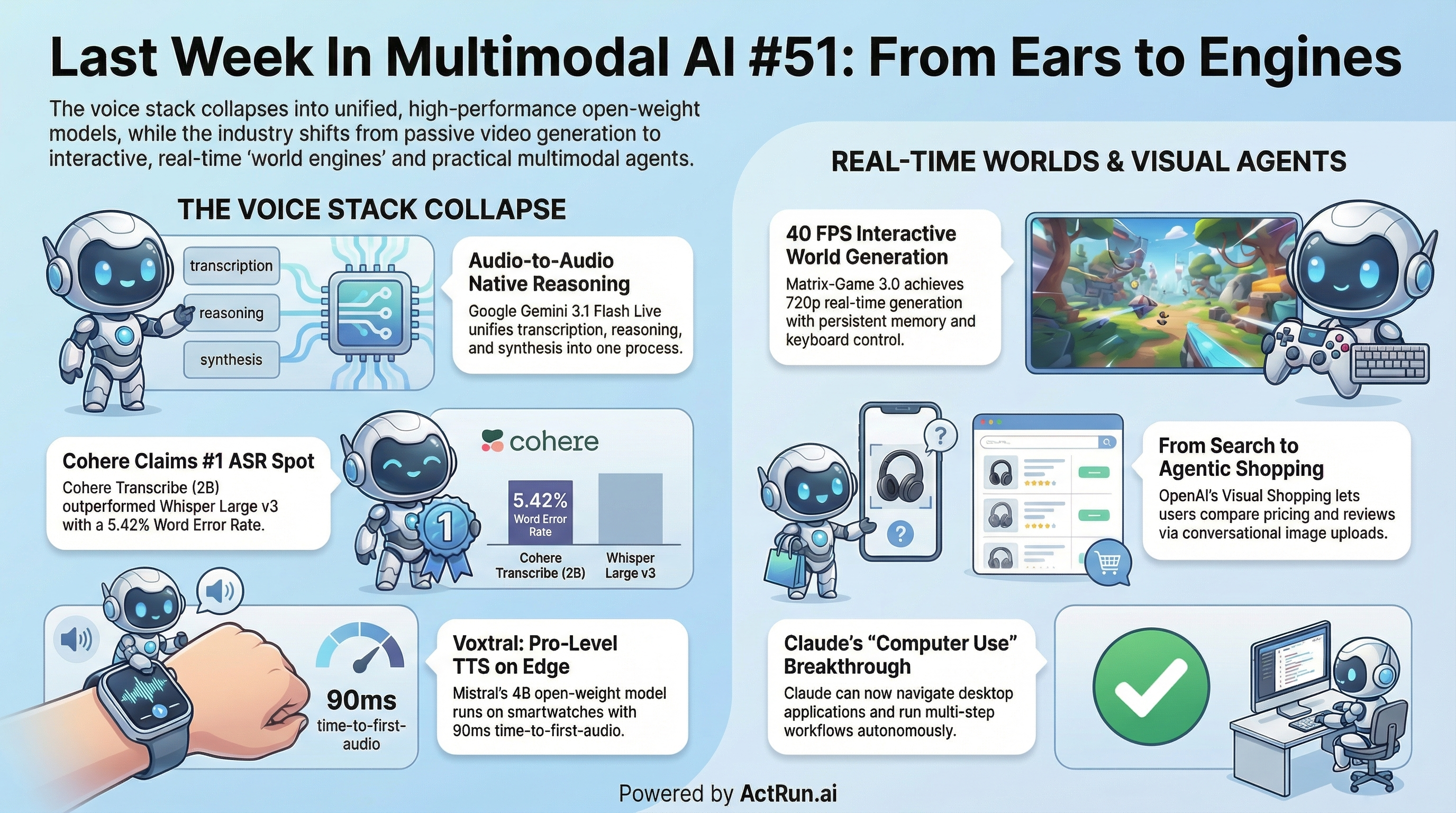

Google Gemini 3.1 Flash Live

collapses transcribe-reason-synthesize into a single audio-to-audio process. Text, image, audio, and video input with 128K context. 90+ languages, tool calling mid-session, stateful bidirectional WebSocket streaming. Model Card [https://deepmind.google/models/model-cards/gemini-3-1-flash-live/]

Mistral Voxtral TTS

* Mistral Voxtral TTS is a 4B open-weight TTS built for edge. Runs on smartwatches, phones, laptops. 9 languages, 90ms time-to-first-audio, captures speaker personality including pauses, rhythm, and emotion. Blog [https://mistral.ai/news/voxtral-tts] | Model [https://huggingface.co/mistralai/Voxtral-4B-TTS-2603]

Cohere Transcribe

Voxtral TTS is preferred to ElevenLabs Flash v2.5 in human evaluations. * Cohere Transcribe is a 2B open-source ASR that took #1 on HuggingFace’s Open ASR Leaderboard at 5.42% WER, beating Whisper Large v3, ElevenLabs Scribe v2, and IBM Granite 4.0. 14 languages. Conformer encoder, trained on 500K hours. Model [https://huggingface.co/CohereLabs/cohere-transcribe-03-2026]

LongCat-AudioDiT

* LongCat-AudioDiT is a diffusion-based TTS operating in waveform latent space. 3.5B and 1B variants, ComfyUI integration already available. 3.5B Model [https://huggingface.co/meituan-longcat/LongCat-AudioDiT-3.5B] | 1B Model [https://huggingface.co/meituan-longcat/LongCat-AudioDiT-1B] | ComfyUI Node [https://github.com/Saganaki22/ComfyUI-LongCat-AudioDIT-TTS]

Ming-Flash-Omni

* Ming-Flash-Omni (research) is a 100B MoE natively handling text, images, and speech via a thinker-talker architecture. Matches Gemini 2.5 Pro on vision-language, streams up to 10 hours of audio or 400 seconds of video. Paper [https://arxiv.org/html/2510.24821v3]

Matrix-Game 3.0

(Skywork) is a memory-augmented world model with mouse+keyboard control. 720p at 40 FPS with a 5B model, stable consistency over minute-long sequences. Trained on Unreal Engine synthetic data, GTA5 gameplay captures, and real-world video. Model [https://huggingface.co/Skywork/Matrix-Game-3.0]

DaVinci-MagiHuman

is a 15B single-stream Transformer from SII-GAIR and Sand.ai. Jointly denoises video and audio in one token sequence, no cross-attention. 5-second 256p clip in 2 seconds on one H100, 80% win rate vs Ovi 1.1, 60.9% vs LTX 2.3. 7 languages, full stack Apache 2.0. Model [https://huggingface.co/GAIR/daVinci-MagiHuman] | Demo [https://huggingface.co/spaces/SII-GAIR/daVinci-MagiHuman]

HyDRA

(Kuaishou/Kling team) tackles a specific memory gap: when dynamic subjects leave the frame and re-emerge, current models freeze or lose them. Hybrid memory acts as archivist for static backgrounds and tracker for dynamic subjects, with spatiotemporal relevance-driven retrieval. Project [https://kj-chen666.github.io/Hybrid-Memory-in-Video-World-Models/]

OpenAI Visual Shopping

turns ChatGPT into a visual commerce agent. Upload an image, get visually similar products, compare pricing and reviews side-by-side, refine conversationally. First consumer-facing multimodal agent use case that feels like it might actually stick. Post [https://openai.com/index/powering-product-discovery-in-chatgpt/] | Video [https://openai.com/index/powering-product-discovery-in-chatgpt/?video=1176364664]

Claude Computer Use

lets Claude open files, click through applications, and run multi-step workflows on your behalf in the background. General-purpose desktop agent.

Perception meets reasoning.

Two complementary papers tackle how MLLM responses interleave perception tokens (grounding visual content) and reasoning tokens (building chains). The first identifies the interdependence problem when extending RLVR to multimodal settings (Paper [https://arxiv.org/abs/2603.25077]). The second proposes Trajectory-Guided RL (TGRL) with expert reasoning trajectories and token-level reweighting to structure the transition (Paper [https://arxiv.org/abs/2603.26126])

CLIPPER

uses few-shot retrieval to select fine-tuning examples, cutting dataset size by 50% on Qwen2.5 and Llama-3.2-Vision without losing accuracy. Paper [https://arxiv.org/abs/2603.28058]

Efficient LVLM Inference

is a comprehensive survey covering visual token compression, KV-cache management, architectural design, and decoding strategies. Paper [https://arxiv.org/abs/2603.27960]

Fed-MA

proposes federated pretraining for multimodal LLMs, sharing only lightweight encodings to keep sensitive data on-device. Paper [https://arxiv.org/abs/2603.26786]

SALMUBench

benchmarks how well multimodal encoders “unlearn” specific image-text associations. Existing deletion methods either fail to forget or erase too broadly. Paper [https://arxiv.org/abs/2603.26316]

GEM

presents a native graph-based index for multi-vector retrieval. Paper [https://arxiv.org/abs/2603.20336] | GitHub [https://github.com/sigmod26gem/sigmod26gem]

Meta TRIBE v2

is a brain-predictive foundation model acting as a digital twin of neural activity. Predicts brain response to video, audio, and text across thousands of fMRI regions at 70x the resolution of prior work. Lets researchers test hypotheses without putting people in scanners. Post [https://ai.meta.com/blog/tribe-v2-brain-predictive-foundation-model/] | GitHub [https://github.com/facebookresearch/tribev2] | Model [https://huggingface.co/facebook/tribev2]

PSDesigner

automates graphic design using a human-like creative workflow. GitHub [https://github.com/FudanCVL/PSDesigner] | Project [https://henghuiding.com/PSDesigner/]

LGTM (Apple)

* LGTM (Apple) breaks the resolution scaling barrier in feed-forward 3D Gaussian Splatting. Decouples geometry from rendering resolution via compact primitives with per-primitive textures. Native 4K novel view synthesis in a single forward pass, no per-scene optimization. Project [https://yxlao.github.io/lgtm/]

Awesome AI Voice:

Shoutout to @wildmindai for their curated list of open-source models for music, TTS, ASR, and Audio SR. GitHub [https://github.com/wildminder/awesome-ai-voice]

ComfyUI VACE Video Joiner v2.5:

* ComfyUI VACE Video Joiner v2.5: Shoutout to goddess_peeler for seamless loops and reduced RAM usage on assembly. Post [https://www.reddit.com/r/StableDiffusion/comments/1s6997m/update_comfyui_vace_video_joiner_v25_seamless/?utm_source=share&utm_medium=web3x&utm_name=web3xcss&utm_term=1&utm_content=share_button]

PixelSmile:

A Qwen-Image-Edit LoRA for fine-grained facial expression control. Hugging Face Model [https://huggingface.co/PixelSmile/PixelSmile/tree/main] | Reddit Discussion [https://www.reddit.com/r/StableDiffusion/comments/1s62g0z/pixelsmile_a_qwenimageedit_lora_for_fine_grained/?utm_source=share&utm_medium=web3x&utm_name=web3xcss&utm_term=1&utm_content=share_button]

Nano Banana LoRA Dataset Generator:

* Nano Banana LoRA Dataset Generator: Shoutout to @OdinLovis for updating the generator. Post [https://x.com/odinlovis/status/2038980979256078818?s=42] | Webapp [https://lovis.io/NanoBananaLoraDatasetGenerator/] | GitHub [https://github.com/lovisodin/NanoBananaLoraDatasetGenerator]

Claude + OpenArt Worlds:

The Virtual Production Pipeline. Post [https://x.com/AmirMushich/status/2036169931612467708?s=20]