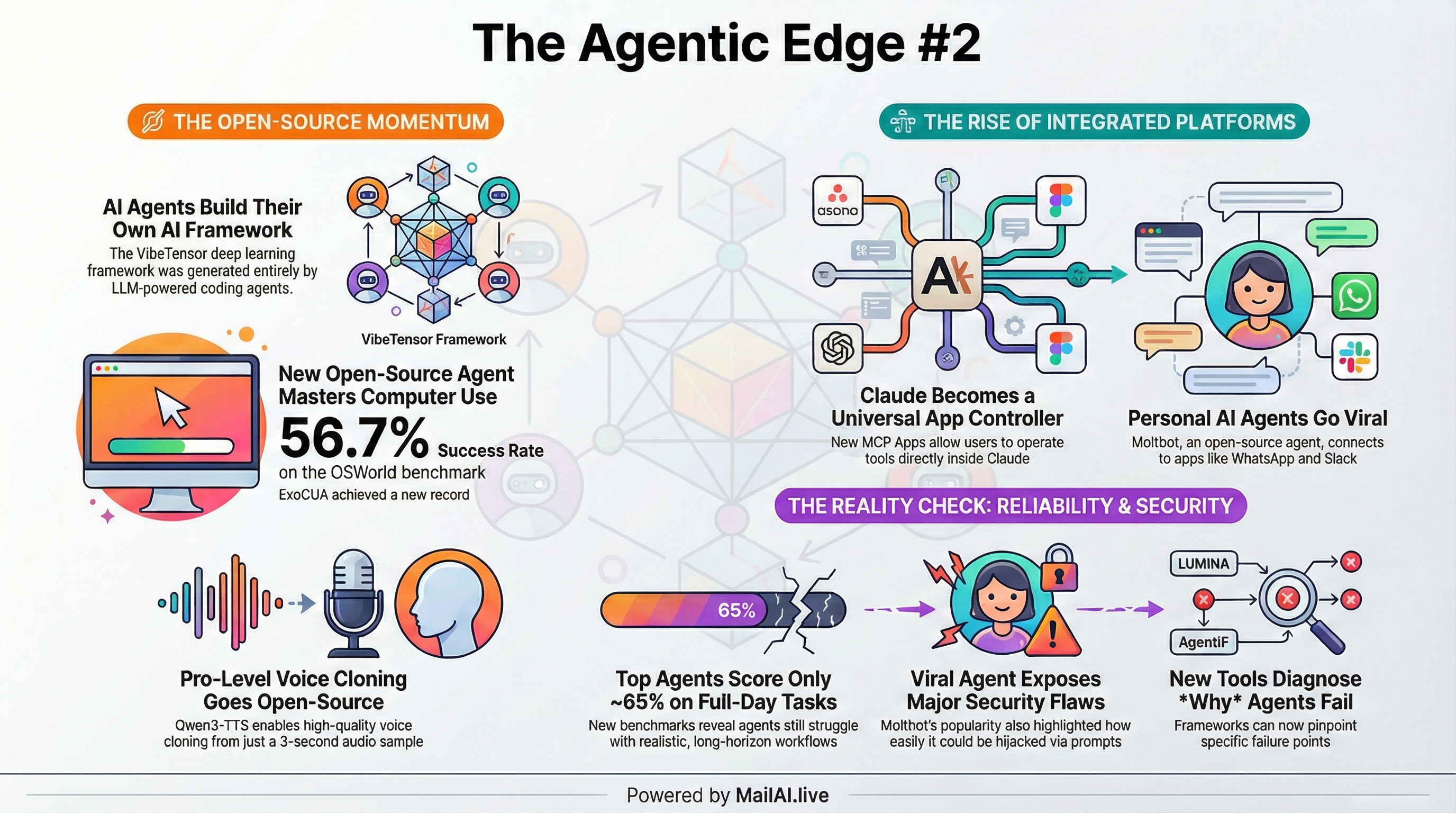

Moltbot (formerly Clawdbot) Goes Viral

* Open-source personal AI that runs on your machine and connects via WhatsApp, Telegram, Discord, or Slack
* Combines persistent memory, proactive “heartbeat” check-ins, and extensible skills that it can write itself
* ⚡ Curator’s take: Clawdbot has shown a much wider audience just how powerful it can be to combine basic agentic loops with the right tools.
* Moltbot [https://www.molt.bot/] | Discussion [https://x.com/omooretweets/status/2015618038088024164] | Major Security Issue [https://x.com/0xsammy/status/2015562918151020593]
----------------------------------------

Anthropic Launches MCP Apps

* First official extension to the Model Context Protocol: tools can now return interactive UIs instead of plain text
* Users can operate Asana, Slack, Figma, Box, Canva, Amplitude, Hex, and monday.com directly inside Claude’s chat
* Built in collaboration with the team behind OpenAI’s Apps SDK, so the same protocol works across platforms
* ⚡ Curator’s take: Anthropic is positioning Claude as a cross-application interface layer, not just a chatbot.
* Claude Announcement [https://x.com/claudeai/status/2015851783655194640] | MCP Apps Blog [http://blog.modelcontextprotocol.io/posts/2026-01-26-mcp-apps/]
----------------------------------------

MiniMax Agent Launches

* MiniMax’s new personal assistant combines Claude Cowork-style capabilities with agent skills and Clawdbot-like extensibility
* Positions MiniMax in the emerging “personal AI OS” race alongside Anthropic and the open-source community
----------------------------------------

EvoCUA: #1 Open-Source Computer Use Agent

* Meituan’s evolutionary framework achieves 56.7% on OSWorld, surpassing the previous open-source SOTA (OpenCUA-72B at 45%) and closed models such as UI-TARS-2 (53.1%)
* Key innovation: synthetic task generation, sandbox rollouts, and learning from failures through iterative policy optimization
* Available in 32B and 8B variants under Apache 2.0
* ⚡ Curator’s take: The evolutionary approach (generate synthetic tasks → run in sandbox → learn from failures) is proving more effective than simply scaling imitation learning. This is the recipe to watch.
* Model Weights [https://huggingface.co/meituan/EvoCUA-32B-20260105] | Paper [https://huggingface.co/papers/2601.15876] | GitHub [https://github.com/meituan/EvoCUA]
----------------------------------------
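A toy sketch of the generate → rollout → learn-from-failures loop described for EvoCUA. Everything here is hypothetical: a "policy" is a single skill number, a "task" is just a difficulty value, and the learning step nudges skill toward the tasks it failed. It illustrates the shape of the loop, not Meituan's training code.

```python
import random
from dataclasses import dataclass


@dataclass
class ToyPolicy:
    # Hypothetical stand-in for a model: one scalar "skill" level.
    skill: float = 1.0


def generate_tasks(rng: random.Random, n: int, max_difficulty: float) -> list[float]:
    # Synthetic task generation: each toy task is only a difficulty value.
    return [rng.uniform(0.0, max_difficulty) for _ in range(n)]


def sandbox_rollout(policy: ToyPolicy, difficulty: float) -> bool:
    # Sandbox rollout: the toy task succeeds iff the policy's skill covers it.
    return policy.skill >= difficulty


def learn_from_failures(policy: ToyPolicy, failures: list[float], lr: float = 0.5) -> None:
    # Move skill toward the mean difficulty of the failed tasks
    # (a stand-in for a policy-optimization step driven by failed rollouts).
    if failures:
        target = sum(failures) / len(failures)
        policy.skill += lr * (target - policy.skill)


def evolve(rounds: int = 5, tasks_per_round: int = 20, seed: int = 0) -> tuple[ToyPolicy, list[float]]:
    rng = random.Random(seed)
    policy = ToyPolicy()
    success_history = []
    for r in range(rounds):
        # The task distribution gets harder each round (a simple curriculum).
        tasks = generate_tasks(rng, tasks_per_round, max_difficulty=2.0 + r)
        failures = [d for d in tasks if not sandbox_rollout(policy, d)]
        success_history.append(1 - len(failures) / len(tasks))
        learn_from_failures(policy, failures)
    return policy, success_history


policy, history = evolve()
print(f"final skill={policy.skill:.2f}, per-round success={[round(h, 2) for h in history]}")
```

The design choice worth noticing: only failed rollouts drive the update, and the generator keeps raising the bar, so the policy is always trained near the edge of its ability.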

VIGA: Vision-as-Inverse-Graphics Agent

* Converts images into editable 3D Blender code through interleaved multimodal reasoning
* Reports a +124.70% improvement on BlenderBench using analysis-by-synthesis loops with evolving contextual memory
* Can reconstruct scenes from scratch using primitives or leverage 3D asset generation tools
* ⚡ Curator’s take: This is “vision as inverse graphics” in its truest sense. It’s early days, but this feels like a big unlock for 3D vision, games, animation, and more.
* Project Page [https://fugtemypt123.github.io/VIGA-website/] | Paper [https://arxiv.org/abs/2601.11109] | Code [https://github.com/Fugtemypt123/VIGA] | Benchmark [https://huggingface.co/datasets/DietCoke4671/BlenderBench]
----------------------------------------
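VIGA's analysis-by-synthesis idea, in miniature: propose a scene program, render it, compare the rendering against the observation, and revise. In this hypothetical sketch the "program" is a single axis-aligned box on an 8×8 grid and the revision step is a greedy edge search rather than VIGA's LLM-written Blender code, so treat it as an illustration of the loop only.

```python
Box = tuple[int, int, int, int]  # (x0, y0, x1, y1), half-open pixel edges
Image = list[list[int]]

SIZE = 8


def render(box: Box, size: int = SIZE) -> Image:
    # "Synthesis": rasterize the hypothesized scene program into a binary image.
    x0, y0, x1, y1 = box
    return [[1 if x0 <= c < x1 and y0 <= r < y1 else 0 for c in range(size)]
            for r in range(size)]


def mismatch(a: Image, b: Image) -> int:
    # "Analysis": count pixels where the rendering disagrees with the observation.
    return sum(pa != pb for row_a, row_b in zip(a, b) for pa, pb in zip(row_a, row_b))


def refine(target: Image, init: Box = (0, 0, SIZE, SIZE), max_iters: int = 50) -> tuple[Box, int]:
    box = init
    best = mismatch(render(box), target)
    for _ in range(max_iters):
        improved = False
        for edge in range(4):
            for delta in (-1, 1):
                cand = list(box)
                cand[edge] += delta
                # Keep the box non-empty.
                if cand[2] <= cand[0] or cand[3] <= cand[1]:
                    continue
                loss = mismatch(render(tuple(cand)), target)
                if loss < best:  # accept only strict improvements
                    box, best, improved = tuple(cand), loss, True
        if not improved:
            break
    return box, best


target = render((2, 3, 5, 5))
print(refine(target))  # → ((2, 3, 5, 5), 0)
```

Greedy search like this can stall in local optima on harder targets; a learned or LLM-driven proposer is one way to make bigger, smarter edits between renders.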

VibeComfy: Claude Code Meets ComfyUI

* CLI tools that let Claude Code understand and edit ComfyUI workflows
* Bridges the gap between agentic coding and visual workflow tools
* Announcement [https://www.reddit.com/r/comfyui/comments/1qkb7i1/vibecomfy_cli_tools_for_claude_code_to_understand/] | GitHub [https://github.com/peteromallet/VibeComfy]
----------------------------------------

AgentIF-OneDay: Full-Day Workload Benchmark

* XBench’s new benchmark tests agents on tasks representing a full day of human work
* 104 tasks across Work, Life, and Study domains with 767 granular scoring points
* Three task categories: workflow execution (following explicit steps), latent instruction inference (learning from examples), and iterative refinement (adapting to changing requirements)
* Top agents (Manus, Genspark, ChatGPT-Agent) achieve overall success rates of only 62–65%
* ⚡ Curator’s take: Finally, a benchmark that tests agents on realistic, extended tasks rather than isolated capabilities.
* Paper [https://github.com/xbench-ai/AgentIF-OneDay/blob/main/paper/AgentIF_OneDay_0117.pdf] | Leaderboard [https://xbench.org/agi/agentif] | GitHub [https://github.com/xbench-ai/AgentIF-OneDay] | Dataset [https://huggingface.co/datasets/xbench/AgentIF-OneDay] | Blog [https://open.substack.com/pub/xbench/p/xbench-launches-agentif-oneday?utm_campaign=post-expanded-share&utm_medium=web]
----------------------------------------
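How might granular scoring points roll up into a per-task success rate? A minimal sketch, assuming each point is a pass/fail check and every task counts equally. The `TaskResult` type and the aggregation rule are hypothetical; AgentIF-OneDay's actual scoring may weight points or tasks differently.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class TaskResult:
    domain: str                # "Work", "Life", or "Study"
    points_passed: list[bool]  # one entry per granular scoring point


def task_score(t: TaskResult) -> float:
    # Fraction of this task's scoring points the agent satisfied.
    return sum(t.points_passed) / len(t.points_passed)


def overall_score(results: list[TaskResult]) -> float:
    # Unweighted mean over tasks (an assumption; points could be pooled instead).
    return mean(task_score(t) for t in results)


def score_by_domain(results: list[TaskResult]) -> dict[str, float]:
    groups: dict[str, list[TaskResult]] = {}
    for t in results:
        groups.setdefault(t.domain, []).append(t)
    return {domain: overall_score(ts) for domain, ts in groups.items()}


results = [
    TaskResult("Work", [True, True, False, True]),
    TaskResult("Life", [True, False]),
    TaskResult("Study", [True, True, True]),
    TaskResult("Work", [False, True]),
]
print(overall_score(results))  # → 0.6875
print(score_by_domain(results))
```

Pooling all 11 points instead would give 8/11 ≈ 0.727 for the same toy results, which is why the aggregation rule matters when comparing headline numbers.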

LUMINA: Oracle Counterfactual Framework

* Introduces oracle interventions to isolate why agents fail: planning errors, state-tracking drift, or context-management breakdowns
* Procedurally generated, game-like environments allow each capability to be tested independently
* Key finding: planning interventions consistently help, but the usefulness of other skills varies by model size and environment
* ⚡ Curator’s take: The oracle counterfactual approach is clever: instead of guessing why agents fail, give them perfect components one at a time and measure the impact.
* Paper [https://arxiv.org/abs/2601.16649]
----------------------------------------
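The oracle-counterfactual idea can be shown with a deliberately simple model: give each capability a reliability, assume an episode succeeds only if all of them work (and that they fail independently), then swap in a perfect "oracle" for one component at a time and measure the change. The component names follow LUMINA's failure categories; the numbers and the multiplicative success model are hypothetical.

```python
from math import prod

# Hypothetical per-component reliabilities for a toy agent (not from the paper).
BASELINE = {"planning": 0.6, "state_tracking": 0.8, "context_management": 0.9}


def success_rate(reliability: dict[str, float]) -> float:
    # Toy assumption: an episode succeeds only if every component works,
    # and components fail independently.
    return prod(reliability.values())


def oracle_gains(baseline: dict[str, float]) -> dict[str, float]:
    base = success_rate(baseline)
    gains = {}
    for name in baseline:
        # Counterfactual: replace exactly one component with a perfect oracle.
        intervened = {**baseline, name: 1.0}
        gains[name] = success_rate(intervened) - base
    return gains


for name, gain in oracle_gains(BASELINE).items():
    print(f"{name}: +{gain:.3f}")
```

Under this toy model the weakest component yields the largest gain; the point of the real framework is to measure such gains in actual environments rather than assume a formula.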

When Agents Fail to Act: Diagnostic Framework

* Evaluates 1,980 deterministic test cases across Qwen2.5, Functionary, GPT-4, and Claude 3.5/3.7
* Categorizes failures into 12 distinct error types spanning initialization, parameter handling, execution, and result interpretation
* ⚡ Curator’s take: Essential reading for anyone building multi-agent systems: we finally have a taxonomy for tool-invocation failures.
* Paper [https://arxiv.org/abs/2601.16280]
----------------------------------------
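A minimal sketch of stage-level failure attribution for a single tool call, using the four stages named in the diagnostic framework. The registry format, the type-checking rule, and the `agent_reading` comparison are hypothetical stand-ins; the paper's 12 finer-grained error types are not reproduced here.

```python
from enum import Enum
from typing import Any, Callable, Optional


class Stage(Enum):
    INITIALIZATION = "initialization"
    PARAMETER_HANDLING = "parameter_handling"
    EXECUTION = "execution"
    RESULT_INTERPRETATION = "result_interpretation"


# Hypothetical tool registry: name -> (callable, {param: accepted type(s)}).
Registry = dict[str, tuple[Callable[..., Any], dict[str, Any]]]

_UNSET = object()  # sentinel: the agent has not reported a reading of the result


def diagnose_call(registry: Registry, name: str, args: dict[str, Any],
                  agent_reading: Any = _UNSET) -> Optional[Stage]:
    """Return the first stage at which a tool call goes wrong, or None if it succeeds."""
    # Initialization: the agent asked for a tool that does not exist.
    if name not in registry:
        return Stage.INITIALIZATION
    fn, schema = registry[name]
    # Parameter handling: missing, unexpected, or mistyped arguments.
    if set(args) != set(schema):
        return Stage.PARAMETER_HANDLING
    if any(not isinstance(args[p], t) for p, t in schema.items()):
        return Stage.PARAMETER_HANDLING
    # Execution: the tool itself raised.
    try:
        result = fn(**args)
    except Exception:
        return Stage.EXECUTION
    # Result interpretation: the agent's reading disagrees with the actual output.
    if agent_reading is not _UNSET and agent_reading != result:
        return Stage.RESULT_INTERPRETATION
    return None


TOOLS: Registry = {"divide": (lambda a, b: a / b, {"a": (int, float), "b": (int, float)})}
print(diagnose_call(TOOLS, "divide", {"a": 1, "b": 0}))  # → Stage.EXECUTION
```

Checking stages in pipeline order means each failure is attributed to the earliest point where it could have been caught, which is usually where a fix belongs.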

Controlling Long-Horizon Behavior with Explicit State Dynamics

* Addresses abrupt persona shifts during extended conversations
* Maintains an external Valence-Arousal-Dominance (VAD) state with momentum-based dynamics for temporal coherence
* ⚡ Curator’s take: A practical solution to the “agent personality drift” problem that plagues long conversations.
* Paper [https://arxiv.org/abs/2601.16087]
----------------------------------------
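A minimal sketch of an external VAD state with momentum, assuming each conversational turn yields a target VAD reading in [-1, 1]³ (for example from a hypothetical sentiment classifier). The update rule and the `beta`/`step` values are illustrative assumptions, not the paper's exact dynamics.

```python
from dataclasses import dataclass, field


def clip(x: float, lo: float = -1.0, hi: float = 1.0) -> float:
    return max(lo, min(hi, x))


@dataclass
class VADState:
    # Each dimension lives in [-1, 1].
    valence: float = 0.0
    arousal: float = 0.0
    dominance: float = 0.0
    velocity: list[float] = field(default_factory=lambda: [0.0, 0.0, 0.0])

    def as_tuple(self) -> tuple[float, float, float]:
        return (self.valence, self.arousal, self.dominance)

    def update(self, target: tuple[float, float, float],
               beta: float = 0.8, step: float = 0.5) -> None:
        """Nudge the state toward the VAD reading of the latest turn.

        beta: momentum coefficient (how much past motion persists).
        step: how far the state moves per unit of velocity.
        """
        new_state = []
        for i, (current, goal) in enumerate(zip(self.as_tuple(), target)):
            # Momentum: blend the previous velocity with the current pull toward the goal.
            self.velocity[i] = beta * self.velocity[i] + (1 - beta) * (goal - current)
            new_state.append(clip(current + step * self.velocity[i]))
        self.valence, self.arousal, self.dominance = new_state


state = VADState()
state.update((1.0, 1.0, 1.0))  # one abruptly "excited" turn
print([round(v, 3) for v in state.as_tuple()])  # → [0.1, 0.1, 0.1]
```

Because the velocity carries memory of earlier turns, a single outlier turn moves the persona only slightly, while a sustained shift in tone still comes through over several turns.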

If You Want Coherence, Orchestrate a Team of Rivals

* Argues that single-agent systems lack a safety net: the same entity that makes mistakes also judges whether mistakes were made
* Proposes multi-agent architectures inspired by organizational intelligence
* ⚡ Curator’s take: A useful paper on how to design good multi-agent systems. Self-review shares the blind spots of the original reasoning.
* Paper [https://arxiv.org/abs/2601.14351]
----------------------------------------
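A toy sketch of the "team of rivals" pattern: one agent proposes, independent critics review, and any single objection sends the draft back. The proposer and critics below are hypothetical functions on a trivial arithmetic task; in practice each role would be a separately prompted (or separately trained) model.

```python
from typing import Callable, Optional

# A proposer drafts an answer given the task and any objections raised so far.
Proposer = Callable[[str, list[str]], str]
# A rival critic returns an objection string, or None if it accepts the answer.
Critic = Callable[[str, str], Optional[str]]


def orchestrate(task: str, proposer: Proposer, critics: list[Critic],
                max_rounds: int = 3) -> tuple[Optional[str], list[str]]:
    feedback: list[str] = []
    for _ in range(max_rounds):
        answer = proposer(task, feedback)
        # Every critic reviews independently; any single objection blocks acceptance.
        objections = [o for o in (critic(task, answer) for critic in critics) if o is not None]
        if not objections:
            return answer, feedback
        feedback.extend(objections)
    return None, feedback  # no consensus within budget: escalate rather than guess


# Toy task: sum a comma-separated list of integers.
def sloppy_proposer(task: str, feedback: list[str]) -> str:
    nums = [int(x) for x in task.split(",")]
    # Deliberate bug on the first attempt: drops the last number.
    return str(sum(nums) if feedback else sum(nums[:-1]))


def sum_checker(task: str, answer: str) -> Optional[str]:
    expected = sum(int(x) for x in task.split(","))
    return None if answer == str(expected) else f"expected {expected}, got {answer}"


def format_checker(task: str, answer: str) -> Optional[str]:
    return None if answer.lstrip("-").isdigit() else "answer is not an integer"


print(orchestrate("1,2,3", sloppy_proposer, [sum_checker, format_checker]))
# → ('6', ['expected 6, got 3'])
```

The key property is that the checker recomputes the answer its own way instead of rereading the proposer's reasoning, so it does not inherit the proposer's blind spot.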

Communication Methods in Multi-Agent RL Survey

* Comprehensive taxonomy of communication methods in multi-agent reinforcement learning
* Finds research converging on attention-based and graph-based methods that balance information quality against computational constraints
* Identifies two critical gaps that remain in the field
* ⚡ Curator’s take: If you’re building multi-agent systems, this survey will save you from reinventing the wheel.
* Paper [https://arxiv.org/abs/2601.12886]
----------------------------------------
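For a feel of what attention-based communication means here: each agent scores the other agents' messages against its own query and receives a softmax-weighted mix of them. This is a minimal, dependency-free sketch of scaled dot-product attention over messages; the query/key/value vectors are hypothetical inputs, not anything taken from the survey.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query: list[float], keys: list[list[float]],
           values: list[list[float]]) -> tuple[list[float], list[float]]:
    # Scaled dot-product attention over incoming messages.
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    message = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return message, weights


def communicate(queries: list[list[float]], keys: list[list[float]],
                values: list[list[float]]) -> list[list[float]]:
    """Each agent i receives an attention-weighted summary of the other agents' messages."""
    inbox = []
    for i, q in enumerate(queries):
        others = [j for j in range(len(queries)) if j != i]
        msg, _ = attend(q, [keys[j] for j in others], [values[j] for j in others])
        inbox.append(msg)
    return inbox


same = [[1.0, 0.0]] * 3
print(communicate(same, same, [[0.0], [3.0], [6.0]]))  # → [[4.5], [3.0], [1.5]]
```

With identical keys the weights are uniform and each agent simply averages its peers; distinct keys let an agent focus on the few messages relevant to it, which is the bandwidth-versus-quality tradeoff the survey describes. Graph-based methods restrict the `others` set to neighbors instead.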

MemCtrl: MLLMs as Active Memory Controllers

* Uses multimodal LLMs as dynamic memory controllers for embodied agents
* Enables better long-term memory management in physical environments
* Paper [https://arxiv.org/abs/2601.20831]
----------------------------------------

Deep Researcher Reflect Evolve

* Advanced AI research agent using sequential planning with reflection and crossover mechanisms
* Improves idea generation and validation in scientific domains
* Paper [https://arxiv.org/abs/2601.20843]
----------------------------------------

Qwen3-TTS: Open-Source Voice Revolution

* New family of TTS models supporting 3-second voice cloning, voice design from natural-language descriptions, and 10 languages
* Dual-track architecture achieves 97 ms first-packet latency for real-time applications
* Outperforms MiniMax and ElevenLabs on WER and speaker-similarity benchmarks
* Released under Apache 2.0 in 0.6B and 1.7B variants
* ⚡ Curator’s take: This democratizes capabilities that were previously locked behind expensive APIs.
* GitHub [https://github.com/QwenLM/Qwen3-TTS] | HuggingFace Demo [https://huggingface.co/spaces/Qwen/Qwen3-TTS]
----------------------------------------

NVIDIA PersonaPlex: Full-Duplex Conversational AI

* Real-time speech-to-speech model with persona control through text prompts and voice conditioning
* First to combine full-duplex conversation (listening and speaking simultaneously) with customizable roles and voices
* Handles interruptions, backchannels, and authentic conversational rhythm
* Built on the Moshi architecture and trained on the Fisher corpus plus synthetic customer-service data
* ⚡ Curator’s take: This breaks the tradeoff between natural conversation and customization that has plagued conversational AI.
* GitHub [https://github.com/NVIDIA/personaplex] | Project Page [https://research.nvidia.com/labs/adlr/personaplex/]
----------------------------------------

LingBot-VLA: Pragmatic VLA Foundation Model

* First empirical evidence that VLA models scale with massive real-world robot data
* Trained on ~20,000 hours of data from 9 dual-arm robot configurations
* Achieves a throughput of 261 samples/second/GPU (a 1.5–2.8× speedup over existing codebases)
* ⚡ Curator’s take: Scaling laws for vision-language-action models are finally being validated with real-world deployment data.
* Paper [https://huggingface.co/papers/2601.18692] | Project Page [https://technology.robbyant.com/lingbot-vla] | Models [https://huggingface.co/collections/robbyant/lingbot-vla]
----------------------------------------

VibeTensor: Deep Learning Stack Built by AI Agents

* Open-source deep learning framework generated entirely by LLM-powered coding agents under high-level human guidance
* Successfully ran end-to-end training on computer vision and language modeling workloads
* ⚡ Curator’s take: AI agents just built an entire deep learning framework from scratch. This demonstrates that coding agents can generate coherent system software that crosses abstraction boundaries, validated entirely through automated builds and tests.
* Paper [https://arxiv.org/abs/2601.16238] | GitHub [https://github.com/NVlabs/vibetensor]
----------------------------------------

Gemini 3 Flash

* Google brings frontier-level reasoning to cost-sensitive deployments
* Achieves Pro-grade benchmark scores at Flash pricing
* ⚡ Curator’s take: While Gemini 3 Pro and Antigravity were announced in late 2025, the Flash release this week makes frontier reasoning accessible for production workloads.
----------------------------------------

Antigravity Platform Multi-Model Support

* Google’s agentic IDE now supports 7 models, including Gemini 3 Pro, Gemini 3 Flash, Gemini 3 Deep Think, Claude Sonnet 4.5, and GPT-OSS-120B
* ⚡ Curator’s take: The IDE wars are heating up as model-agnostic tooling becomes table stakes.
----------------------------------------

Remotion Skills + MCP

* Remotion releases an agent skills framework with MCP integration
* Enables AI agents to work with programmatic video generation
* GitHub [https://github.com/remotion-dev/skills] | Announcement [https://x.com/Remotion/status/2013626968386765291]
----------------------------------------

Capturing Expert Knowledge for AI Tools

* Research showing that experts hold two types of knowledge: explicit procedural rules (which translate to code) and tacit design principles (which can be embedded in LLM prompts)
* Applied to visualization creation, reducing non-expert errors while preserving expert efficiency
* ⚡ Curator’s take: Critical domain knowledge resides with a few specialists, creating bottlenecks. This paper provides a framework for capturing that knowledge systematically.
* Paper [https://arxiv.org/abs/2601.15153]
----------------------------------------

That’s a wrap for The Agentic Edge #2! This space moves fast, so we will inevitably miss important releases. Please let us know if we missed anything or how we can improve this roundup.

The Living Edge is a reader-supported publication. To receive new posts and support my work, consider becoming a free or paid subscriber.