MJ1

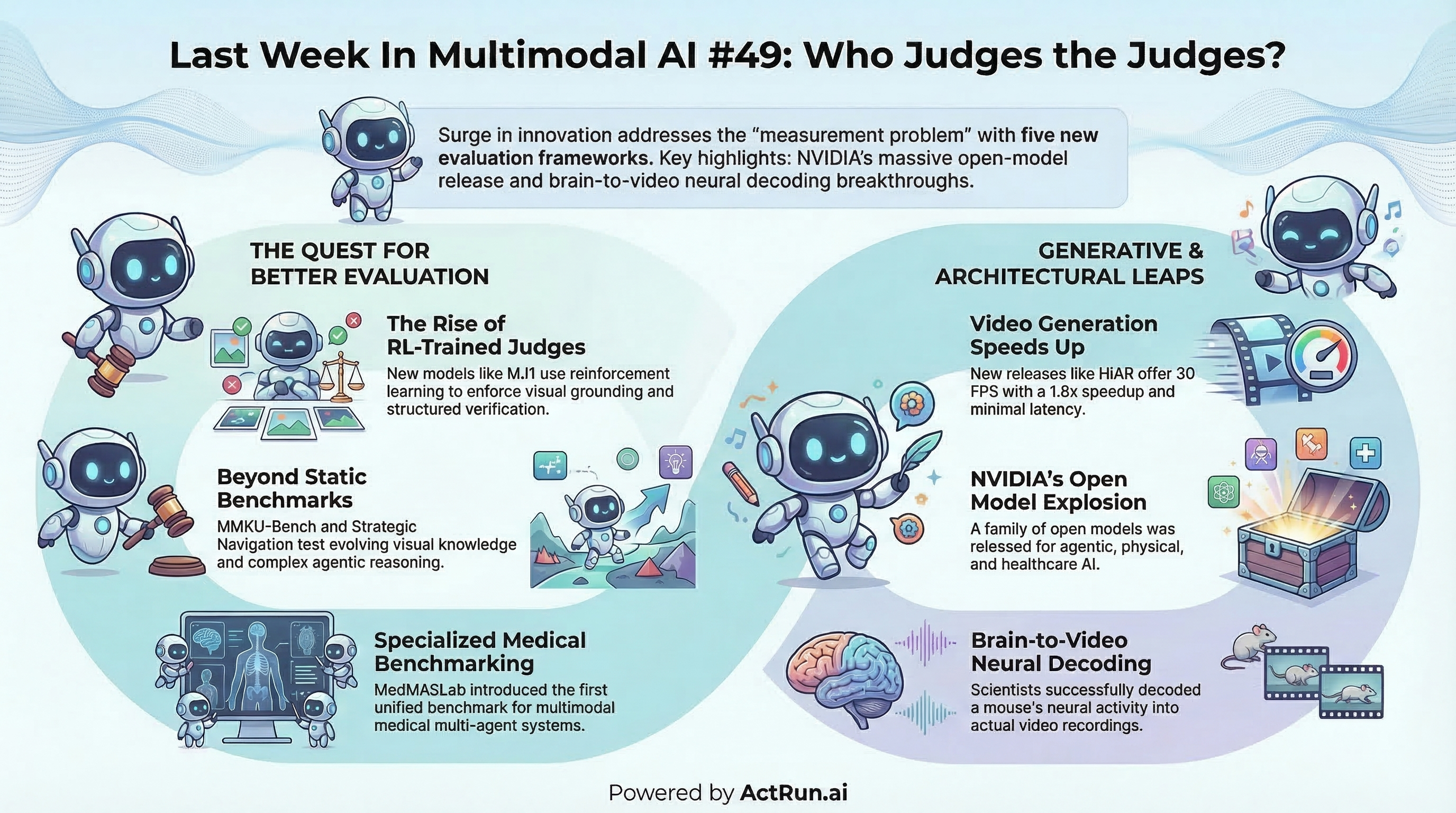

(paper [https://arxiv.org/abs/2603.07990]) — RL-trained multimodal judge, 3B params, enforces visual grounding via structured verification chain. 77.0% on Multimodal RewardBench 2, beating Gemini-3-Pro

MT-RL-Judge

(paper [https://arxiv.org/abs/2603.11665]) — jointly optimizes multimodal judges across multiple tasks with RL. Breaks past the single-task generalization bottleneck

MMKU-Bench

(paper [https://arxiv.org/abs/2603.15117]) — tests whether multimodal LLMs handle evolving visual knowledge, not just static facts

Strategic Navigation or Stochastic Search?

(paper [https://arxiv.org/abs/2603.12180], code [https://github.com/Snowflake-Labs/MADQA], data [https://huggingface.co/datasets/OxRML/MADQA]) — 2,250 human-authored questions across 800 heterogeneous PDFs. Tests six core properties: extractive, multi-hop, closed-world, grounded, agentic, visual

MedMASLab

(paper [https://arxiv.org/abs/2603.09909]) — first unified benchmark for multimodal medical multi-agent systems. 11 architectures, 24 medical modalities

MatAnyone 2

(demo [https://huggingface.co/spaces/PeiqingYang/MatAnyone], code [https://github.com/pq-yang/MatAnyone2], project [https://pq-yang.github.io/projects/MatAnyone2/]) — cuts out moving objects from video using a built-in evaluator that judges its own edge quality. Learns from millions of real-world frames

HiAR

(project [https://jacky-hate.github.io/HiAR/]) — Tencent’s scalable long video gen. 30 FPS, 1.8x speedup, 0.30s latency

ViFeEdit

(code [https://github.com/Lexie-YU/ViFeEdit]) — professional video editing from 2D image pairs only. Wan2.1/2.2-T2V-A14B + LoRA. 100% object addition, 91.5% color accuracy

FlashMotion

(project [https://quanhaol.github.io/flashmotion-site/], weights [https://huggingface.co/quanhaol/FlashMotion]) — few-step controllable gen on Wan2.2-TI2V. Multi-object box/mask guidance, 50x speedup, camera motion support

Training-Free Refinement

(code [https://github.com/HKUST-LongGroup/Coarse-guided-Gen], paper [https://arxiv.org/pdf/2603.12057]) — zero-shot cinematic camera control, super-resolution, and inpainting for Wan2.2 and CogVideoX. No additional training

Zero-Shot Identity-Driven AV Synthesis

(project [https://id-lora.github.io/], weights [https://huggingface.co/AviadDahan/ID-LoRA-TalkVid]) — based on LTX-2, 24% higher speaker similarity than Kling. Unified prompt control with native environment sound sync

MoDA (Mixture-of-Depths Attention)

(paper [https://arxiv.org/abs/2603.15619]) — lets queries attend to historical depth key-value pairs, resolving information dilution in deep LLMs. Near FlashAttention-2 efficiency

CoCo

(code [https://github.com/micky-li-hd/CoCo]) — teaches AI to perform its own image-to-image translations for complex visual compositions

Visual Words Meet BM25

(paper [https://arxiv.org/abs/2603.05781]) — applies Okapi BM25 scoring to sparse “visual words” extracted with a sparse autoencoder (SAE) from ViT patch features
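
To make the idea concrete, here is a minimal sketch of Okapi BM25 applied to bags of visual words. It assumes each image has already been reduced to a list of integer visual-word IDs; the SAE extraction step is not shown, and the function name, the toy IDs, and the `k1`/`b` defaults are illustrative assumptions rather than the paper's settings.

```python
import math
from collections import Counter

def bm25_scores(query, docs, k1=1.2, b=0.75):
    """Score each doc (a list of visual-word ids) against a query (a list of ids).

    Hypothetical helper: k1 and b are common textbook defaults, not values
    taken from the paper.
    """
    n_docs = len(docs)
    avg_len = sum(len(d) for d in docs) / n_docs
    # Document frequency: in how many docs each visual word appears.
    df = Counter(w for d in docs for w in set(d))
    scores = []
    for d in docs:
        tf = Counter(d)
        score = 0.0
        for w in set(query):
            if w not in tf:
                continue
            # Smoothed IDF variant that stays positive for frequent words.
            idf = math.log(1 + (n_docs - df[w] + 0.5) / (df[w] + 0.5))
            # Term-frequency saturation with document-length normalization.
            norm = tf[w] + k1 * (1 - b + b * len(d) / avg_len)
            score += idf * tf[w] * (k1 + 1) / norm
        scores.append(score)
    return scores

# Toy "images" as lists of visual-word ids (made-up values).
docs = [[1, 2, 3], [4, 5, 6], [1, 1, 7]]
scores = bm25_scores([1], docs)
```

In this toy example the third image contains the query word twice, so it ranks above the first, and the second image scores zero because it shares no visual word with the query.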

Uber Eats Semantic Retrieval

(paper [https://arxiv.org/abs/2603.06586]) — production system: unified two-tower on gte-Qwen2-1.5B replacing fragmented BERT/MiniLM models and lexical search

STRUCTUREDAGENT

(paper [https://arxiv.org/abs/2603.05294]) — hierarchical planning for web tasks via dynamic AND/OR trees. LLM invoked only for local operations
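
As a rough illustration of the AND/OR tree idea, the sketch below evaluates a small static tree: an AND node succeeds only if all of its subgoals succeed, and an OR node succeeds if any alternative does. The `Node` class, `solve` function, and the example web task are hypothetical; the leaf callables stand in for the local operations that would call an LLM, and the dynamic tree expansion described in the paper is not modeled.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional

@dataclass
class Node:
    name: str
    kind: str = "LEAF"  # one of "AND", "OR", "LEAF"
    children: List["Node"] = field(default_factory=list)
    # Leaf action; a stand-in for a local, LLM-backed operation.
    action: Optional[Callable[[], bool]] = None

def solve(node: Node) -> bool:
    """Return True if the (sub)goal rooted at `node` can be satisfied."""
    if node.kind == "LEAF":
        return bool(node.action and node.action())
    if node.kind == "AND":
        # Every subgoal must succeed; stops at the first failure.
        return all(solve(c) for c in node.children)
    if node.kind == "OR":
        # Any alternative may succeed; stops at the first success.
        return any(solve(c) for c in node.children)
    raise ValueError(f"unknown node kind: {node.kind}")

# Hypothetical web task: log in, then reach the item by search or by menu.
task = Node("buy item", "AND", [
    Node("log in", action=lambda: True),
    Node("find item", "OR", [
        Node("use search box", action=lambda: False),
        Node("browse category menu", action=lambda: True),
    ]),
])
result = solve(task)
```

Here the search path fails but the menu path succeeds, so the OR node and therefore the whole task succeed.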

NVIDIA Open Model Families

(announcement [https://investor.nvidia.com/news/press-release-details/2026/NVIDIA-Expands-Open-Model-Families-to-Power-the-Next-Wave-of-Agentic-Physical-and-Healthcare-AI/default.aspx]) — multimodal, omni-understanding, and safety models for agentic, physical, and healthcare AI

GlyphPrinter

(project [https://henghuiding.com/GlyphPrinter/], code [https://github.com/FudanCVL/GlyphPrinter], weights [https://huggingface.co/FudanCVL/GlyphPrinter]) — glyph-accurate multilingual text rendering for t2i. Posters, menus, specific text styles

Foundation 1

(post [https://x.com/RoyalCities/status/2033652117643395428?s=20], weights [https://huggingface.co/RoyalCities/Foundation-1]) — text-to-sample model for music production. 7 GB VRAM

SWM (Seoul World Model)

(project [https://seoul-world-model.github.io/]) — city-scale world simulation grounded in real-world layouts. Built on Cosmos-Predict2.5-2B

InSpatio-WorldFM

(paper [https://arxiv.org/abs/2603.11911], code [https://github.com/inspatio/worldfm]) — open-source real-time generative frame model for spatial and temporal generation

Anima Preview 2

(weights [https://huggingface.co/circlestone-labs/Anima/tree/main/split_files/diffusion_models]) — latest preview of the Anima diffusion models

Google Workspace CLI

(code [https://github.com/googleworkspace/cli/blob/main/skills/recipe-schedule-recurring-event/SKILL.md]) — dynamically built from Google Discovery Service. Drive, Gmail, Calendar, Sheets, Docs, Chat, Admin + AI agent skills

Mouse Neural Decoding to Video

(paper [https://elifesciences.org/articles/105081]) — recorded neural activity from a mouse brain, decoded it back into video. Actual decoding, not hallucination

LTX-2.3 Colorizer LoRA

(weights [https://huggingface.co/DoctorDiffusion/LTX-2.3-IC-LoRA-Colorizer]) — colorizes B&W footage via IC-LoRA. Prompt-based control, detail-preserving blending

Visual Prompt Builder

(reddit [https://www.reddit.com/r/StableDiffusion/comments/1rtz6jl/i_built_a_visual_prompt_builder_for_ai/], by TheGopherBro) — control camera, lens, lighting, style without writing complex prompts

Z-Image Base Inpainting

(reddit [https://www.reddit.com/r/StableDiffusion/comments/1rrqrpf/so_turns_out_zimage_base_is_really_good_at/?utm_source=share&utm_medium=web3x&utm_name=web3xcss&utm_term=1&utm_content=share_button], by nsfwVariant) — highlighted for exceptional inpainting realism

Nano Banana 2 Developer Guide

(post [https://x.com/_philschmid/status/2033573084297331163?s=20], by @_philschmid) — four use cases with the Gemini Interactions API: travel posters, web-grounded images, real photo retrieval, compositing

‘YouTube Poop’ by Claude Opus 4.6

(post [https://x.com/josephdviviano/status/2031196768424132881?s=20], by @josephdviviano)