T5Gemma 2

Google released T5Gemma 2, the next generation of encoder-decoder models. The architecture combines bidirectional understanding with flexible text generation.

Perception Encoder Audiovisual (PE-AV)

Meta released PE-AV, the technical engine behind SAM Audio’s audio separation capabilities. The model processes both visual and audio information to isolate individual sound sources. https://substackcdn.com/image/fetch/$s_!uJMQ!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fccc9bdf8-c72e-4558-b456-bbd0f3142122_2698x588.png

MiMo-V2-Flash

Xiaomi released MiMo-V2-Flash, optimized for speed in real-time applications. The model sacrifices some accuracy for dramatic latency reductions.

TurboDiffusion

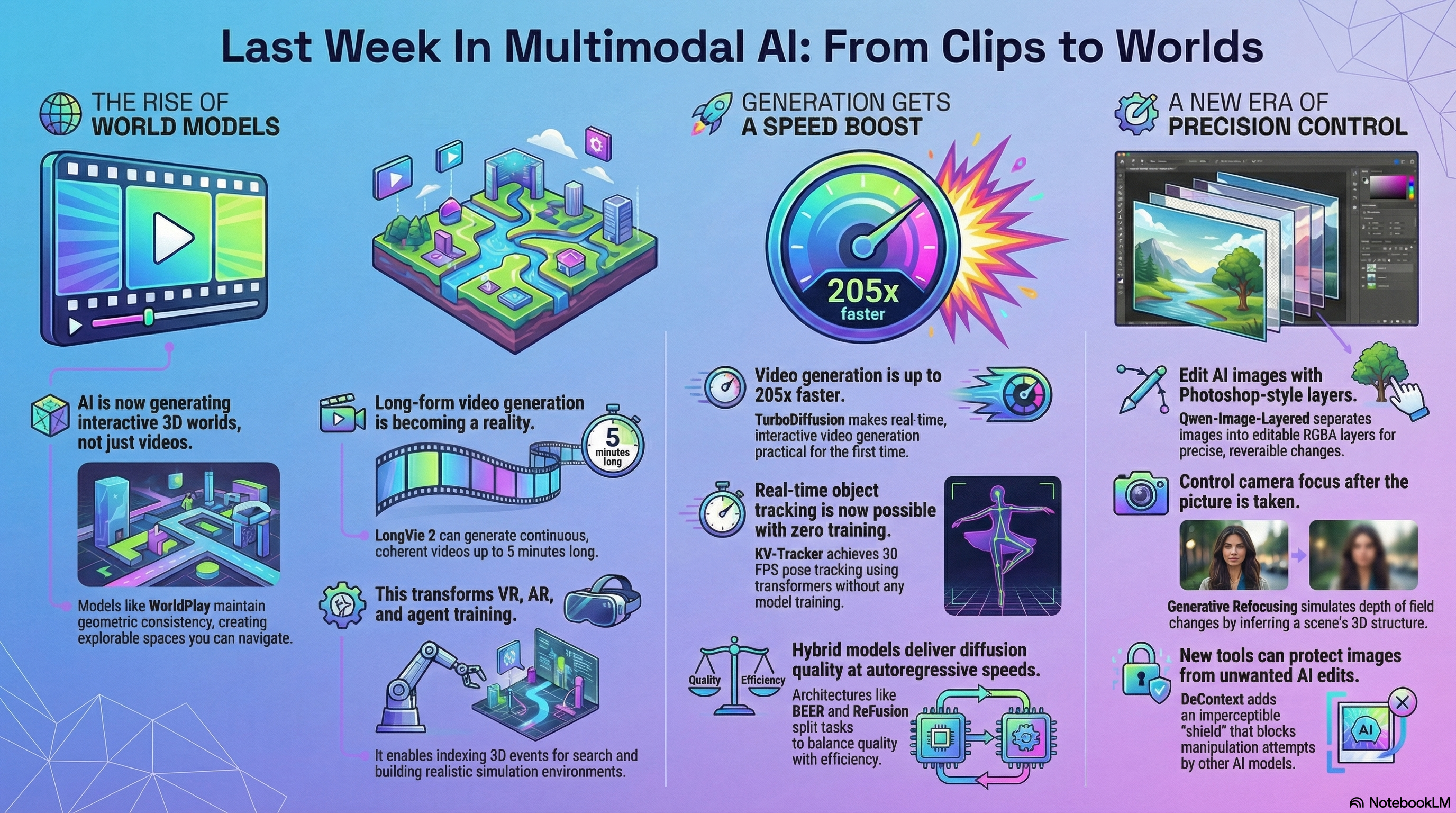

TurboDiffusion accelerates video diffusion models by 100-205 times through architectural optimizations. The speedup comes from reducing redundant computations without quality loss.

Qwen-Image-Layered

Qwen-Image-Layered decomposes images into multiple RGBA layers that can be independently edited. Each layer isolates specific semantic or structural components while maintaining visual coherence.

N3D-VLM

N3D-VLM grounds spatial reasoning in native 3D representations rather than 2D projections. The model understands depth, distance, and spatial relationships directly.

MemFlow

MemFlow maintains adaptive memory for long streaming videos, deciding which frames to remember and which to discard. The system balances memory efficiency with video understanding quality.

WorldPlay

Tencent’s WorldPlay generates interactive 3D worlds with long-term geometric consistency. The model maintains spatial relationships across extended video sequences, allowing persistent interaction with generated environments.

LongVie 2

LongVie 2 generates 5-minute continuous videos with controllable elements and consistent geometry. The model handles multiple modalities and maintains coherence across thousands of frames.

FoundationMotion

FoundationMotion labels and analyzes spatial movement in videos automatically. The system identifies motion patterns and spatial trajectories without manual annotation.

Generative Refocusing

Generative Refocusing controls depth of field in existing images, simulating camera focus changes after capture. The model infers 3D scene structure to generate realistic blur patterns.

StereoPilot

StereoPilot converts 2D videos to stereo 3D through learned generative priors. The system produces depth-aware conversions suitable for VR headsets.

KV-Tracker: Real-Time Pose Tracking with Transformers

KV-Tracker achieves real-time tracking at 30 FPS without any training. The approach uses transformer key-value pairs to track objects and scenes across frames.

DeContext: Protecting Images from Unwanted In-Context Edits

DeContext adds imperceptible perturbations that prevent DiT models like FLUX and Qwen-Image from making unwanted edits. The protection preserves visual quality while blocking manipulation attempts.

EgoX: Generate Immersive First-Person Video from Any Third-Person Clip

EgoX transforms third-person videos into realistic first-person perspectives using video diffusion. The framework from KAIST AI and Seoul National University maintains spatial and temporal coherence during the transformation.

MMGR: Multi-Modal Generative Reasoning

MMGR benchmarks reveal systematic reasoning failures in GPT-4o and other leading multimodal models. The evaluation exposes gaps between perception and logical inference. https://substackcdn.com/image/fetch/$s_!c2F6!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F95443f95-0cd1-4046-83cf-153c58be99ae_2198x980.jpegMMGR Overview

KlingAvatar 2.0 and Kling-Omni Technical Report

KlingAvatar 2.0 generates high-fidelity avatar videos through a spatio-temporal cascade framework. Kling-Omni provides a generalist framework for multimodal video generation with a Co-Reasoning Director that fuses instructions across modalities.

Step-GUI Technical Report

Step-GUI introduces a self-evolving pipeline for GUI automation. The system reaches state-of-the-art on AndroidWorld and OSWorld benchmarks through iterative improvement.

ReFusion

ReFusion combines diffusion models with parallel autoregressive decoding. The architecture bridges autoregressive models and diffusion models for faster text generation.

DEER

DEER uses diffusion models to draft content and autoregressive models to verify it. The two-stage approach balances generation quality with computational efficiency.

IC-Effect

IC-Effect applies video effects through in-context learning without fine-tuning. The system learns effect patterns from examples and applies them to new videos.

Flow Map Trajectory Tilting

Flow Map Trajectory Tilting improves diffusion model outputs at test time using flow maps. The technique adjusts generation trajectories without retraining.