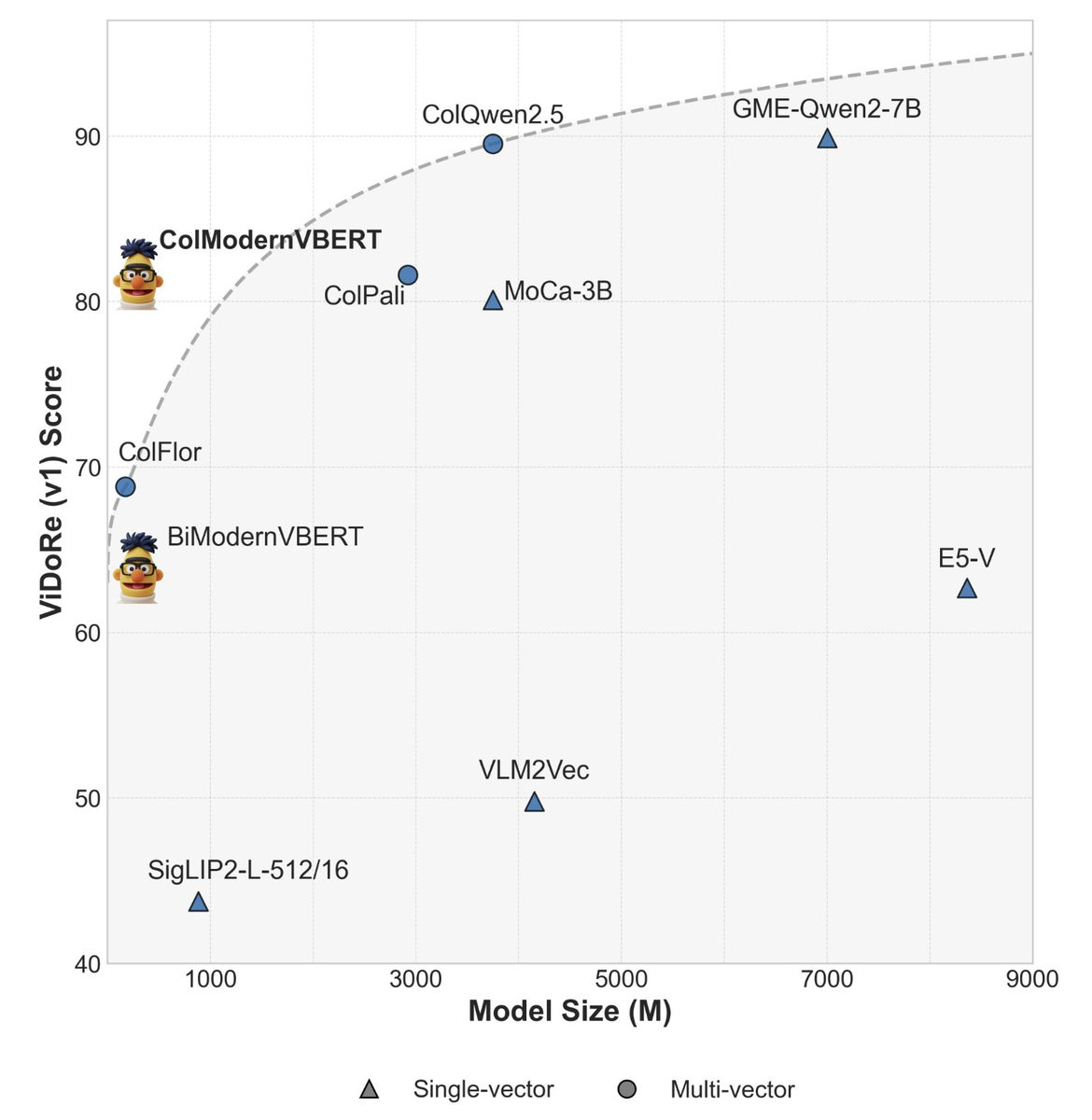

ModernVBERT: Towards Smaller Visual Document Retrievers

EPFL researchers built a 250M parameter model that matches systems 10x larger on document retrieval. They discovered bidirectional attention beats causal attention by +10.6 nDCG@5 for retrieval, and that mixing text-only pairs with image-text during training fixes data scarcity through cross-modal transfer. https://substackcdn.com/image/fetch/$s_!De-2!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F753a8eb5-577f-459a-a49c-58383bc5dc18_1170x1200.jpeg

DocPruner: A Storage-Efficient Framework for Multi-Vector Visual Document Retrieval

DocPruner slashes storage for visual document retrieval by 50-60% without hurting performance. The system analyzes attention scores to identify which patches matter, then adapts pruning intensity per document—aggressively cutting sparse pages while preserving dense ones. https://substackcdn.com/image/fetch/$s_!tAra!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fc2e4ed74-cc43-4184-8fbe-ca406aef0a62_2000x475.pngThe illustration of comparison between OCR-based (a) & LVLM-based (b) paradigms for VDR, and DocPruner (c), a novel framework to adaptively prune the patch-level embeddings for diverse document types.

LEAML: Label-Efficient Adaptation to Out-of-Distribution Visual Tasks

LEAML adapts multimodal models to specialized domains like medical imaging using minimal labeled data plus unlabeled samples. The framework turns abundant unlabeled visual content into useful training signal when expert annotations are expensive or impossible to obtain. https://substackcdn.com/image/fetch/$s_!U2Cn!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fd044006e-35ff-4c6d-8eec-9ff5ccf050da_2000x751.pngOverview of the proposed two-stage LEAML framework for OOD VQA adaptation. In Pseudo QA Generation, the QA Generator is trained using a small set of labeled question-answer pairs and then used to generate pseudo QA pairs for a large collection of unlabeled images. In OOD VQA Finetuning, the VQA model is fine-tuned with both the original labeled data and the produced pseudo QA pairs of unlabeled data, enabling label-efficient adaptation to out-of-distribution visual-question answering.

Coevolutionary Continuous Discrete Diffusion

CCDD enables joint generation across continuous (images, audio) and discrete (text) modalities in one unified process. The coevolutionary approach lets models reason across different representation types simultaneously rather than processing them separately.

GraphSearch: An Agentic Deep Searching Workflow

DataArc’s GraphSearch fixes GraphRAG’s shallow retrieval problem through six-stage deep searching: decomposition, refinement, grounding, drafting, verification, and expansion. The dual-channel approach queries both text chunks and graph structure simultaneously, beating single-round GraphRAG on all benchmarks. https://substackcdn.com/image/fetch/$s_!Xajz!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F2df2869e-c07b-4183-8c0b-7cbbc71f7520_2000x480.pngComparison of using graph data only, text data only, or all data as commonly adopted in GraphRAG approaches. The metric is SubEM. The contribution of retrieved graph data is marginal.

Other New Notable Research:

Fathom-DeepResearch delivers evidence-based web investigation with two 4B models achieving SOTA among open-weights through DuetQA dataset and RAPO optimization.

OpenAI Sora 2

Sora 2 ships with rightsholder controls and revenue sharing. Sam Altman says users are generating way more content than expected, so they’re building opt-in controls where creators get paid when their characters appear in user-generated content—basically “interactive fan fiction” that pays the original creators.

Anthropic Claude Sonnet 4.5

Claude Sonnet 4.5 breaks records: 77.2% on SWE-bench, 61.4% on OSWorld, and can code for 30+ hours straight. Ships with checkpoints in Claude Code, VS Code extension, memory tools for longer agent runs, and the Claude Agent SDK powering it all.

Alibaba Qwen3-VL-30B-A3B-Instruct

Alibaba’s Qwen3-VL uses just 3B active parameters to match GPT-5-Mini and Claude4-Sonnet on STEM, VQA, OCR, video, and agent tasks. Available in standard and FP8 versions, plus a massive 235B-A22B variant for maximum capability.

Tencent HunyuanImage-3.0

HunyuanImage-3.0 improves text-to-image generation across the board: better prompt understanding, higher quality, more consistent styles. Handles complex scenes, detailed characters, and maintains coherence across artistic styles.

Ovi: Twin Backbone Cross-Modal Fusion

Ovi generates synchronized audio and video simultaneously using twin backbone architecture. Creates 5-second 720×720 videos at 24 FPS with matched audio, supporting 9:16, 16:9, and 1:1 aspect ratios from text or text+image inputs.

Other Notable New Tools:

Code2Video generates educational videos from code for automated programming tutorials.